{

"cells": [

{

"cell_type": "markdown",

"metadata": {},

"source": [

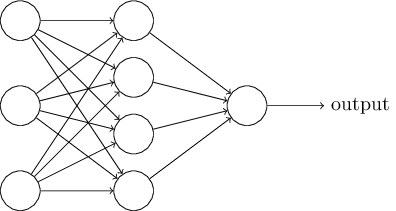

"# Introduction to Neural Networks\n",

"\n",

"A deep feedforward neural network consists of layers of interconnected nodes that are loosely modeled on the biological neurons of a human brain.\n",

"\n",

"**Network:** The model is associated with a directed acyclic graph describing how the functions (layers) are composed together.\n",

"\n",

"**Feedforward:** The information flows from inputs to outputs through the layers of the network.\n",

"\n",

"**Neural:** The inspiration originated from neuroscience. Each element of a layer plays a role analogous to a neuron.\n",

"\n",

"The goal of a feedforward neural network is to approximate some function $\\large f^*$. For example, for a classifier, $\\large y = f^*(x)$ maps an input $\\large x$ to a category $\\large y$. A feedforward network defines a mapping $\\large y = f(x; \\theta)$ and learns the value of the parameters $\\large \\theta$ that result in the best function approximation for $\\large f^*$.\n",
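"\n",
"As a rough illustration of how layers are composed (the three-layer depth and the names $\\large f^{(1)}, f^{(2)}, f^{(3)}$ are assumptions chosen for this example, not something fixed by the definition), a network with three layers can be written as a chain of functions:\n",
"\n",
"$$\\large f(x; \\theta) = f^{(3)}\\left(f^{(2)}\\left(f^{(1)}(x)\\right)\\right)$$\n",
"\n",
"Here $\\large f^{(1)}$ is the first layer, $\\large f^{(2)}$ the second, and $\\large f^{(3)}$ the output layer; $\\large \\theta$ collects the parameters of all three.\n",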

"\n",

"\n",

"\n"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"# Timeline of Deep Learning\n",

"\n",

"Key milestones in deep learning:\n",
"\n",
"* 1943: First mathematical model of a neural network.\n",
"* 1957: Perceptron model.\n",
"* 1969: First AI winter. The single-layer perceptron was shown to be incapable of learning the simple XOR function.\n",
"* 1986: Backpropagation made it practical to train multi-layer networks.\n",
"* 1989: Universal approximation theorem.\n",
"* 1995: Second AI winter. Learning did not scale to larger problems, and SVMs became the method of choice.\n",
"* 1998: Gradient-based learning (convolutional networks applied to document recognition).\n",
"* 2010: The annual ImageNet competition kicked off, built on the ImageNet dataset.\n",
"* 2012: A neural network model nearly halved the existing ImageNet competition error.\n",
"* 2014: Generative adversarial neural networks.\n",
"* 2017: Google DeepMind's AlphaGo beat the world's number-one Go player.\n",

"\n",

"\n",

"\n",

"\n",

"\n"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"# Universal Approximation Theorem\n",

"\n",

"**Informal Statement:**\n",

"\n",

"A feedforward network with a single hidden layer containing a finite number of neurons can approximate any continuous function on compact subsets of $\\large \\mathbb{R}^m$ to arbitrary accuracy, under mild assumptions on the activation function.\n",

"\n",

"**Formal Statement:**\n",

"\n",

"\n",

"\n",

"\n",

"Let ${ \\large \\varphi :\\mathbb {R} \\to \\mathbb {R} }$ be a nonconstant, bounded, and continuous function. Let ${\\large I_{m}}$ denote a compact subset of ${\\large \\mathbb{R}^m}$. The space of real-valued continuous functions on ${\\large \\displaystyle I_{m}}$ is denoted by ${\\large \\displaystyle C(I_{m})}$. Then, given any ${\\large \\displaystyle \\varepsilon >0}$ and any function ${\\large \\displaystyle f\\in C(I_{m})}$, there exist an integer ${\\large \\displaystyle N}$, real constants ${\\large \\displaystyle v_{i},b_{i}\\in \\mathbb {R} }$ and real vectors ${\\large \\displaystyle w_{i}\\in \\mathbb {R} ^{m}}$ for ${\\large \\displaystyle i=1,\\ldots ,N}$ such that we may define:\n",

"\n",

"$${\\large \\displaystyle F(x)=\\sum _{i=1}^{N}v_{i}\\varphi \\left(w_{i}^{T}x+b_{i}\\right)}$$\n",

"\n",

"as an approximate realization of the function ${\\large \\displaystyle f}$; that is,\n",

"\n",

"$${\\large \\displaystyle |F(x)-f(x)|<\\varepsilon } $$ \n",

"\n",

"for all ${\\large \\displaystyle x\\in I_{m}}$. In other words, functions of the form ${\\large \\displaystyle F(x)}$ are dense in ${\\large \\displaystyle C(I_{m})}$.\n",
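"\n",
"As a sketch of how this maps onto a network (the symbols $W$, $b$, and $v$ below are notation introduced here for illustration), stack the vectors $w_i^T$ as the rows of a matrix $W \\in \\mathbb{R}^{N \\times m}$ and collect the $b_i$ and $v_i$ into vectors $b, v \\in \\mathbb{R}^N$. With $\\varphi$ applied elementwise, the sum becomes\n",
"\n",
"$${\\large \\displaystyle F(x) = v^{T}\\varphi\\left(Wx + b\\right)}$$\n",
"\n",
"which is a single hidden layer of $N$ units followed by a linear output. One activation that meets the hypotheses is the logistic sigmoid $\\varphi(z) = 1/(1+e^{-z})$: it is continuous, nonconstant, and bounded between 0 and 1.\n",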

"\n",

"### No Free Lunch Theorem\n",

"\n",

"Averaged over all possible data-generating distributions, every classification algorithm has the same error rate when classifying previously unobserved points. In other words, no machine learning algorithm is **universally** any better than any other. \n",

"\n",

"### Conclusion\n",

"\n",

"Feedforward networks provide a universal system for representing functions. However, there is no universal procedure for examining a training set of specific examples and choosing a function that will **generalize to points not in the training set**.\n"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"# Types of Neural Networks\n",

"\n",

"**Convolutional Neural Networks (CNNs):** \n",

"\n",

"* They are approximately translation-invariant, i.e., the learned features are robust to translations of the input.\n",
"* Convolutional layers are more efficient than fully-connected (dense) layers for images because weight sharing greatly reduces the number of parameters.\n",
"* Applications: Image classification, object detection, segmentation, etc.\n",
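"\n",
"To make the parameter saving concrete, here is a back-of-the-envelope comparison; the $32 \\times 32 \\times 3$ input, the 64 output units or filters, and the $3 \\times 3$ kernel are illustrative assumptions, not values used elsewhere in this notebook:\n",
"\n",
"$$\\text{dense: } (32 \\cdot 32 \\cdot 3) \\cdot 64 + 64 = 196{,}672 \\qquad \\text{convolutional: } (3 \\cdot 3 \\cdot 3) \\cdot 64 + 64 = 1{,}792$$\n",
"\n",
"The two layers do not produce outputs of the same shape, so this is only an order-of-magnitude comparison, but it shows how sharing a small kernel across all image positions keeps the parameter count low.\n",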

"\n",

"\n",

"\n",

"\n",

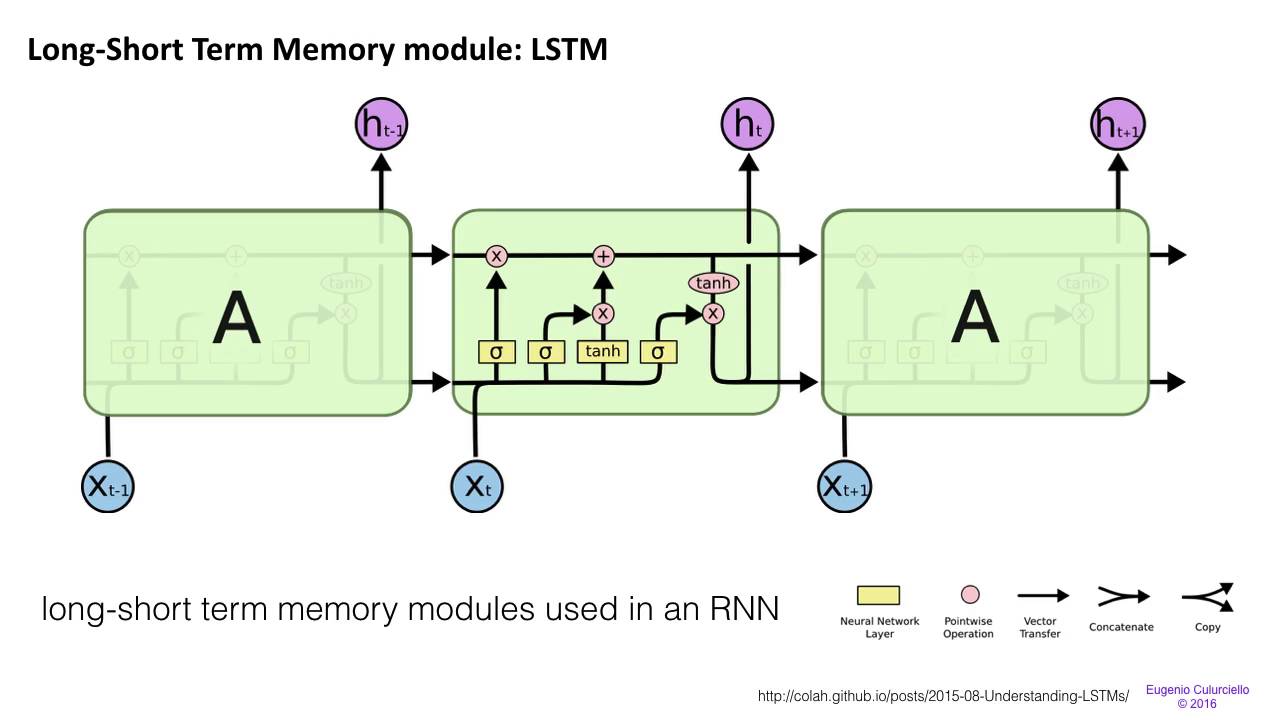

"**Recurrent Neural Networks (RNNs):**\n",

"\n",

"\n",

"\n",

"* Unlike feedforward networks, RNNs contain loops, which allow information to persist from one step to the next (memory).\n",
"* Recurrent neural networks are well suited to processing sequences of data such as sentences and time series.\n",
"* Applications: Speech recognition, handwriting recognition, language models, time series analysis, etc.\n",
"* Long Short-Term Memory (LSTM) networks are a variant of RNNs designed to retain both short-term and long-term information.\n",
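"\n",
"One common way to write the loop (a sketch of a plain, non-LSTM RNN; the symbols $W_h$, $W_x$, $b$ and the choice of $\\tanh$ are assumptions for illustration) is as a hidden state $h_t$ that is updated from the previous state and the current input $x_t$:\n",
"\n",
"$$\\large h_t = \\tanh\\left(W_h h_{t-1} + W_x x_t + b\\right)$$\n",
"\n",
"Because $h_{t-1}$ feeds back into $h_t$, information from earlier elements of the sequence can influence later outputs.\n",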

"\n",

"\n",

"\n",

"\n",

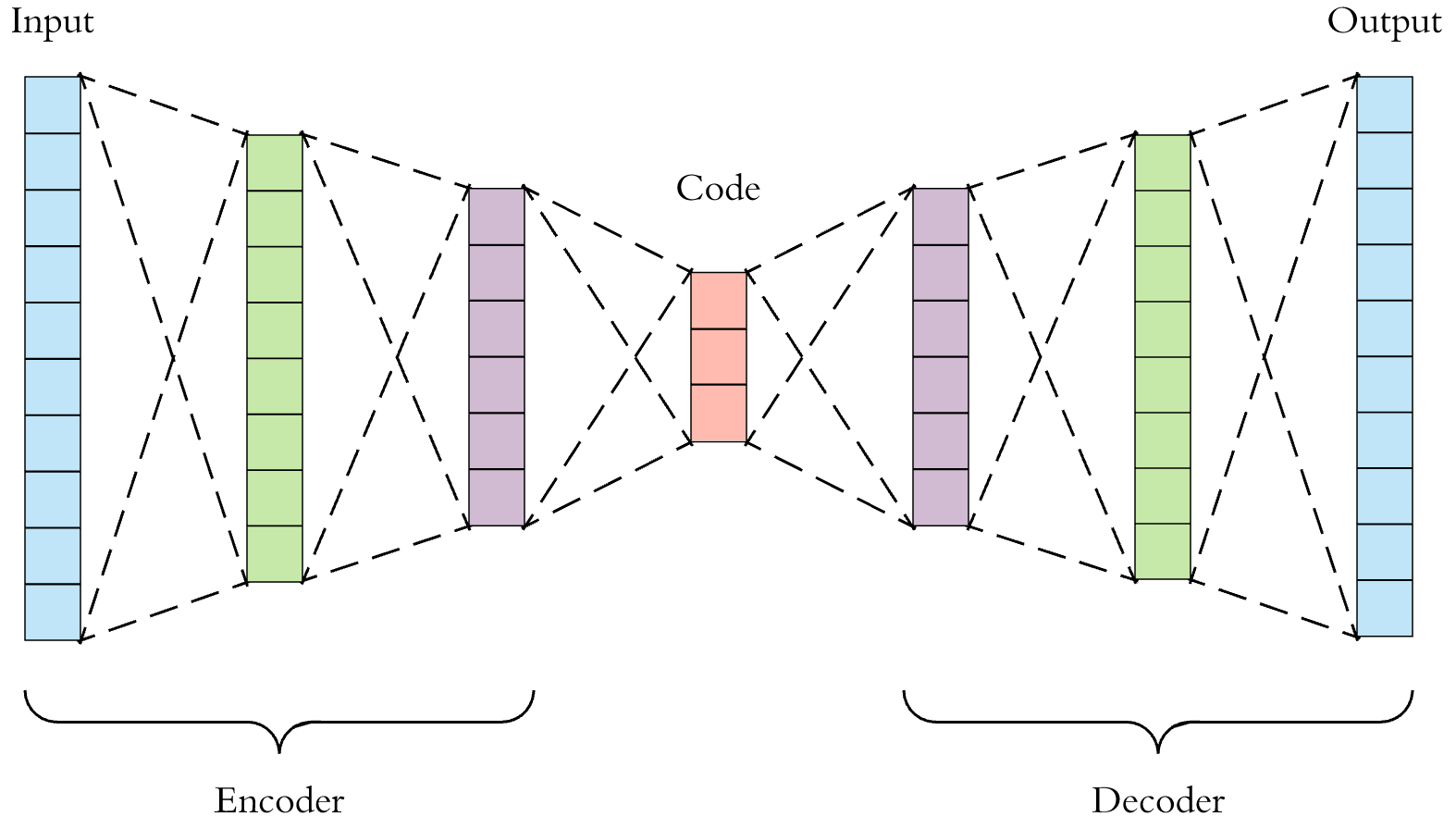

"**Autoencoders:**\n",

"\n",

"* Unsupervised method.\n",
"* Encoder: Compresses data. Decoder: Reconstructs original data from compressed features.\n",
"* Applications: Compression, feature extraction, transfer learning, etc.\n",
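"\n",
"In symbols (a sketch; the names $f$ for the encoder, $g$ for the decoder, and the squared-error loss are assumptions for illustration), an autoencoder is typically trained so that the reconstruction stays close to the input:\n",
"\n",
"$$\\large \\min_{f,\\, g} \\; \\lVert x - g(f(x)) \\rVert^2$$\n",
"\n",
"The code $f(x)$ usually has fewer dimensions than $x$, which is what pushes the network toward a compressed representation.\n",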

"\n",

"\n",

"\n",

"\n",

"\n",

"**Generative Adversarial Neural Networks (GANs):**\n",

"\n",

"* Applications: Image generation (faces, anime characters), style transfer, transfer learning, etc.\n",

"\n",

"\n",

"\n"

]

},

{

"cell_type": "code",

"execution_count": 1,

"metadata": {},

"outputs": [],

"source": [

"# Package imports\n",

"import numpy as np\n",

"import matplotlib.pyplot as plt\n",

"import sklearn\n",

"import sklearn.datasets\n",

"import sklearn.linear_model\n",

"\n",

"np.random.seed(1) # set a seed so that the results are consistent over iterations"

]

},

{

"cell_type": "code",

"execution_count": 2,

"metadata": {},

"outputs": [],

"source": [

"def plot_decision_boundary(model, X, y, title='', one_flag=False):\n",

"    # Set min and max values of decision boundary and add padding\n",
"    x_min, x_max = X[0, :].min() - 1, X[0, :].max() + 1\n",
"    y_min, y_max = X[1, :].min() - 1, X[1, :].max() + 1\n",
"    h = 0.01\n",
"    \n",
"    # Generate a grid of points with distance h between them\n",
"    xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))\n",
"    \n",
"    # Predict the function value for the whole grid\n",
"    zz = np.c_[xx.ravel(), yy.ravel()]\n",
"    if one_flag: # one feature\n",
"        zz = zz.T\n",
"        ww = zz[0,:]**2 + zz[1,:]**2\n",
"        Z = model(ww.reshape(-1,1))\n",
"    else: # two features\n",
"        Z = model(zz)\n",
"    \n",
"    Z = Z.reshape(xx.shape)\n",
"    \n",
"    # Plot the contour and training examples\n",
"    plt.figure(figsize=(6,6))\n",
"    plt.contourf(xx, yy, Z, cmap=plt.cm.Spectral, alpha=0.5)\n",
"    plt.ylabel('x2')\n",
"    plt.xlabel('x1')\n",
"    plt.title(title)\n",
"    plt.scatter(X[0, :], X[1, :], c=y, cmap=plt.cm.Spectral)\n",
"    plt.show()\n",
"\n",

"def sigmoid(x):\n",

"    s = 1/(1+np.exp(-x))\n",
"    return s"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

"## Build dataset"

]

},

{

"cell_type": "code",

"execution_count": 3,

"metadata": {},

"outputs": [

{

"data": {

"image/png": "iVBORw0KGgoAAAANSUhEUgAAAXYAAAFpCAYAAACI3gMrAAAABHNCSVQICAgIfAhkiAAAAAlwSFlzAAALEgAACxIB0t1+/AAAADl0RVh0U29mdHdhcmUAbWF0cGxvdGxpYiB2ZXJzaW9uIDMuMC4zLCBodHRwOi8vbWF0cGxvdGxpYi5vcmcvnQurowAAIABJREFUeJzsnXd4FWX2xz8zc0t6QiCEFgi99yY9CCJdxd5dbKurrl1/u5bVFXV17Wsvq64FFRtKkSIBpIXeQ01CSA/p5baZ+f1xIXC596be5Ka8n+fxeczcmfc9Q27OvHPec75H0nUdgUAgEDQfZH8bIBAIBALfIhy7QCAQNDOEYxcIBIJmhnDsAoFA0MwQjl0gEAiaGcKxCwQCQTNDOHaBQCBoZgjHLhAIBM0M4dgFAoGgmSEcu0AgEDQzDP6YtE2bNnpsbKw/pvZKaWkpwcHB/jajwRH33bJoqfcNzePet2/fnqvrelRV5/nFscfGxrJt2zZ/TO2V+Ph44uLi/G1GgyPuu2XRUu8bmse9S5KUUp3zRChGIBAImhnCsQsEAkEzQzh2gUAgaGYIxy4QCATNDOHYBQKBoJkhHLtAIBA0M4RjFwgEgmaGcOwCgUDQzBCOXSAQCJoZfqk8FfgPh0Nj19aTHDuUQ6s2QRiCdH+bJBAIfIxw7C2IkmIrzz2+nPxTZVgsDowmhQnTA0jcl0WfAdH+Nk8gEPgIEYppQXz93+3kZJVgsTgAsNtUdB3efDEeh0Pzs3UCgcBXCMfegtjyR7JHB66qOocPZPnBIoFAUB/U2bFLkhQjSdIaSZIOSJK0X5Kkv/rCMIHvUb2syiUJbFa1ga0RCAT1hS9W7A7gIV3X+wEXAH+RJKmfD8YV+Jg+A6JBcj/ucGj06te24Q0SCAT1Qp0du67rGbqu7zj9/8XAQaBjXccV+J7rbxtJQIABRTnr3SUJrrxhCEHBJj9aJhAIfImk675Ld5MkKRZYBwzQdb3ovM/uAO4AiI6OHr5w4UKfzesLSkpKCAkJ8bcZ9Y7DoVGYX47F4sBgkAkMhrCwUH+b1eC0lN/3+bTU+4bmce+TJ0/eruv6iKrO85ljlyQpBFgLLNB1/YfKzh0xYoQuOig1DsR9tyxa6n1D87h3SZKq5dh9khUjSZIR+B74siqnLhAIBIL6xRdZMRLwMXBQ1/VX626SQCAQCOqCL1bs44AbgQslSdp1+r+ZPhhXIBAIBLWgzpICuq7/gcckOoFAIBD4A1F5KhAIBM0M4dgFAoGgmSHUHQWCZsC+XemsWnKIokILg4Z1YOrMPoSEmf1tlsBPCMcuEDRxfvh6N8t+2l+h93MiKY/flx/m2VdnEREZ5GfrBP5AhGIEgiZMXm4pS3/Y5yLiZrdrlBRb+XHhbj9aJvAnwrELBE2YPTvTkWX3pDRV1dm+OdUPFgkaAyIUI2gR2O0qu7enUZhXTvfebfxtjs8wGGSnkpsHFEWs21oqwrELmj0px/N46emVOBwaqqojSTD1klBsNhWTSXE7325XWbUkkbUrj2K3q4wc05lZlw8gNCzAD9ZXztCRnfj03S1ux41GmfEXdvODRYLGgHikC5o1mqrxyrOrKSm2YSl3YLep2KwqFoudnzzEoDVN59/PrOaHr3aTkVZEbnYpK5cc4skHllBaYvXDHVROcIiZP919AUaTUrFCNwcYaN8pnDlXDPSzdQJ/IVbsgmbNoQPZWD10h9J1iF9xhKtuGuZyfP/uDJKOnsJmO3uNw6FRUmRh1ZJDXHL1oHq3uaaMi+tGzz5RrP/9GMWFFvoPbs+w0TEiFNOCEY5d0KwpKfa+yraU292O7d2RhvV0s+9zsds1diSkNkrHDtC2XSiXXzfE32YIGgnikS5o1vTsE4XD4bmfa7ee7puogcEmrytd0WVK0FQQjl3QrImIDOLCi3thMp+zSSo5E0mune/er2DspG7IinuWidlsYMqM3vVpqk
DgM4RjFzR7rrt1BDfcNpL2ncIICTUxaGgH2ncKp3sv9xV7dPtQbrh9JEajgtGkYDDImEwK4yZ3ZfgFMX6wXiCoOSLGLmj2SJLEpIt6MuminhXH4uPjvZ4fd1FPhgzvyLbNqdhtKoOHd6RDTHgDWNqwnEjOJzujmA6dwpvl/bVkhGMXYLOpbFp7nM3rkzGbDUy8qAdDR3ZC8lL44gtUVWPHllS2bjyBOcDAhAu706tf23qbr6ZERAYxdWbzDL2UFFt59bnfSU3OR5FlVFWje+8o7v9bHAGBRn+bJ/ABTcaxWy12NsQfZ/f2dCJaBTD54l7Edm/tb7OaPLoOzz2+nMy0IqxWZzbIgT2ZjBjbmdvvG1svzt1hV/nXU6tIScrDanEgSbB5fRJTZvTmmluG+3w+gSvvvfoHycfyUB0a4NxYPpKYzSdvb+buhyf41ziBT2gSjr2k2Mo/Hl5KYUE5NquKJEtsjE/iqpuHcdGsPv42r0lTXGQhI63QRUTKanWwbWMKF07vRY/eUT6fc+2qoyQfP1Uxp66DzaqyeukhxsZ1o3NsK5/PKXCiqjqJ+zJPO/WzOOwa27ecoLzcTqBYtTd5msTm6Y8Ld5N/quysI9B0bDaVbz7dQVFBuZ+ta9qUFttcnPoZrFaVbZtO1Mucf/x+zOOcDofK1g0p9TKnwImmaigGdxkFAFmWKCuxNbBFgvqgSTj2hD9ScJy3wgCQFYnd29P9YFEzwkukRZZB8ZD25wt03ftxzduHAp9gMCromud/Y6NRISIysIEtEtQHTcKxC+qP0DAzJrN7RM5gUBg9PrZe5hwb182j+JbRpDByTOd6mVPgRJJgzhUDXPP6AZNZ4fLrhwgZgmZCk/gtjhrfBcXgbqqqagwe3sEPFjUfQkLN9OobhTnA6dwlyflHftHsPnTuGlkvc8ZN60nHzhEVc4KzAGj85O5iQ7wBmH3FAK6bP4LI1kFIEkRFh3DLXReIAqxmRJPYPL3smsHs2Z5GQf7ZzVOjQeaqm4cRFiFeHevKQ09NYe+OdLZtSsFoNjAurpvH4h1fYTIpPPHCxWz5I4UtG5IxBxiYNLUH/Qe3r7c5BWeRJInJF/di8sW9/G2KoJ5oEo49JNTMc6/PZkP8cfbsSCc8IpDJF/cUqzsfIcsSg0d0ZPCIjg02p8GoMG5yN8ZNFprhAoGvaRKOHcAcYOTC6b25cLp4XRQ0fTRNZ//uDE6mFBAVHcKQER0xGD1nqwgENaXJOHZB1ZSWWFEUWVQPNnJKiqws+Ptv5OWUYndoGI0y5gADf1twMe06hPnbPEEzQDj2ZsDRxBw+eXsTmenFAPTu35bb7h1L66hgP1sm8MQnb28iK70YVXWm8KoODavFwZsvxvP8m3P9bJ2gOdAksmIE3slIK+Slp1eRllqIqmqoqkbiviyefXQZNqt7wwiBf7FZHezenlbh1M+g65CTVUJGWqGfLBM0J4Rjb+Is/fEAdrurA9c0nfJyOwmiirPRYbOpeCvBUmSZslJR+SmoO8KxN3GSjuaiuRflYrU4SEnKa3iDBJUSHGKidZsgj59puk5MF6GTI6g7wrE3cdp1CMeTAKPJrBDdPrTB7dF1nU1rk3j64aU8ePsPfPTmRrIzixvcjsaKJEncdOdot8pbk1nhqpuGeawCFghqinDsTZxZ8/pj9JAmpygyYyY2fI74Fx9t47/vbCb56ClO5ZSyIf44Tz2whMy0oga3pbEycGgHHvvnRQwc2p7wVoF0792GvzwysdnqvwsaHrE8aOJ07dGa2/86lk/f3YKqaugahEUEcO9jkwgOadjmy7nZJaxdcQS7/axyo6bpWCx2vvtiJ/c+NqlB7WnM9OgdxcNPT/W3GYJminDszYBR42IZNrozqcn5GE0KHWPC67X7kTcO7s1yNoK2ux7Xddi/O6PB7REIWirCsTcTDAaZrj38K7EQEGjwGO8HfBo7PpqYw6IvdpKSlEd4q0BmzxvAuMnd/PIwEwgaI8KxC3
zG4OGetWaMJoW4aT18MseBPRm8tmBNRaOOslI7n72fQEZaEVfeONQncwgETR2xeSrwGSazgfsej8NsNmAyK8iyhDnAQPdebZhzxUCfzPHFR9vcui/ZrA5+W3yAkmKrT+YQCJo6YsUu8Cn9B7fntY8vZ+umFEqKrPTsE0Wvfm19EiZRVY301AKPnxmMCklHTzFwqNDnFwiEYxf4nOAQE3EX9fT5uLIsYTAq2G3u/VI1TSck1OzzOf1NyvE8jh3OJTwigMHDhQKkoHoIxy5oMkiSxIQLu7N+9VHs9nPKbSUIjwggtnv9dHzyB3a7yusL1nD4YDa67uw/azAoPPbs1Bp1tiovt6OpeoOnvgr8i3DsgibFNX8aTnpqIUlHT6HrOrIiERBg5MEnL2xWWTE/fr2bQweyK95OnBmkDv79zGpe//hy5Cp6k2ZnFvPRW5s4mpgNSHSICWf+Xy6ob7MFjQTh2AVNCrPZwP8tmEbS0VMkHztFq9ZBDBzaodk1YY5fccRjyMlqdXDoQDZ9B7bzem15uZ1nH1tGSbENXdMBndTkfF58ciVX3RZdj1YLGgvCsQuaJF17tPZ73n59Yim3e/2squyfTWuPY7Oop536WRx2laICi0/sEzRumtcyRyBoJnTr6bmZuMOh0aNPVKXXJh3Nw+pBi19VdY/HBc0P4dgFgkbItfOHYzK7K0DGTetFq0jPsr9naN8xzE09EpxZRZ4E4wTND+HYBYJGSPdeUfz9+YsZNKwDwSEm2nUM4/pbR3DDbSOqvHb8hd2dmj3nYTDKhEUE1Ie5gkaGiLELmizl5XYO7c/CYJDp3T+62a1GY7u35qGnptT4urDwAB595iL+89JaSktsSBIYDAq33juGorJj9WCpoLEhHLugSbLmt8N89fE2FIOMroMkwV8emSgqT0/TvVcbXv1wHidTClBVjZjYViiKTHy8cOwtARGKETQ5jibm8NUn27DZVMrL7FjK7ZSX2XnzxXjyTpX527xGgyRJxMS2IrZ762aXDiqoHPHbFjQ5fvv1oFdZgfWrj/rBIoGgcSEcu6DJcSq7FF13P+6wa5zKKW14gwSCRoZw7IImR58B0RiM7l9dc4CBXv3a+sEigaBxIRy7oMkxbXYfTCbFpVuTokiEhpkZNS7Wb3adi67rpBzPY9fWk+TlircIQcMismIEjRJd1zlyMIcjidmEhgUwYkxngoKdCoURkUE8/fJMvvx4K/t3ZSDLEiPGdOa6+SM8FuY0NHmnynjlmdVkZxWjKDJ2u8rocbHceu8YsYkpaBCEYxfUC5qqgSQhyzVXXLTbVV55djXHD5/C7lAxGhW++GgrD/x9coX4VbsOYTz0ZM1zvOsbXdd55dnVpJ8sRNN0wLnJu3VjCm3aBjPvuiH+NVDQIhDLhwbG4dAoLrI4HV8zJOV4Hs89vpz5V3zJbVd+ydsvr6OooLxGY/yyaC9HD+VitTrQVB2rxYHV4uCN5+OxeciGaUycSMonJ7P4tFM/i82msnJJop+sErQ0xIq9gVBVjUVf7GT1ssOoqobJZOCSqwZy8dy+ddIRdzg0Vv56kDW/HcFqdTBkRCcuuXoQka0r1xOpD7Izi1nwt9+wWpxCU6qqs33zCZKOnuLF/8ytdvef35ce8pjOqAN7dqQx4oLOvjTbp+TnlZ3WSne3v6zUjqbptXqLEQhqglixNxD/+yCBVUsPYbU4cNg1ykptfP/VbpYvPljrMXVd57XnfueHr3eTlVFMQV4561Yf5cn7f6Ugr+ELdZb8sN/NIauqTlGhhe1bUqu8Xtd1vv5kG8XFNq+fW8q8y9n6gvy8MrIyitxW3NWlc9dIHHbPbxXtOoQKpy5oEHzi2CVJ+kSSpGxJkvb5YrzmRkmxlT9+P47N6voHb7M6WPzt3lqHZQ4fyObIwRyXcTVVp7zczpIf9tfJ5tpw9FCOR4dotThYvfRQlTriO7aksua3I14/11St0gYTdSEro5h/PLyUh+/8kSfu/5X7b/
2enQlVP4zOJ7J1EKPHx7orM5oUrr5luK/MFQgqxVcr9k+B6T4aq9mRlVHkMe8awG5TKSnxvEKtioP7srDZPOhuOzR270ir1Zh1oU3bEK+fHUnM4bG7fyIzvcjrOSt+TfSqF64oEpOm9aR1VHCd7Twfm9XBc48vJ/nYKRx2DZtVpTC/nHf+vZ6ko6dqPN78e8Yw87L+FVk87TqEctfDExg2KsbXpgsEHvFJjF3X9XWSJMX6YqzmSOs2wV5fzyUZgoKMtRo3KNiIwah4jEcHBzd88+KZl/bjwJ4MtzcTcJb7l5bY+OQ/m/jb8xd7vL6yFf2AIe254baRLsd0XefAnkwS92URHGriggldiWgVWGO7t246gdXqcKtmtdtVflm0l/sej6vReIoic9k1g7nsmsEipi7wC2LztAGIiAxiwJAO7N2VjsN+NuxiMitceHGvam8qns/o8bF8+/lOt+Nms4Gps/rUaKzszGK+/XwH+3ZlYDQqTJjSnUuuHoTZXP2vSO/+0Vw3fwRffrQNu4cHma47wzVWq8PjuIOHdyQzrQiHwzU0ZQ4wcNFs101mu13l5X+sIvlYHlaLA6NRZtEXu/jzA+MYMaZLDe4cMk4WVmz4nm9v2onCGo11PsKpC/yBpHsS3ajNQM4V+6+6rg/w8vkdwB0A0dHRwxcuXOiTeX1FSUkJISHeQwl1Rdd1crJKKC+zI0kSuq4THGqmTR1DC2WlNnKySlyO1WTckpISAgOCSEstdImPSxKYzAbadwyrsU0Wi4Os9CKPei4AXbq18pgJpKo66akFqGrVdhTml1OQX+42hyRBTGyrKh3qub/v0hIruVmleDI3KNhE23bV+16UldooKXbqn4eEmgms5ZtYfVLf3/PGTHO498mTJ2/Xdb3KbisNtmLXdf0D4AOAESNG6HFxcQ01dbWIj4+nIWzKzyvjVE4p0e1DCQ3zTTebkmIrO7akYrHYGTCkAx06hVf72vj4eFKPBBG/PN3FoQIEBBi457ERNdY4V1WN+25Z5B5akZw64bfcOtnrtXmnyvhp4W52JpzEaJSZOLUHM+cNcKsofej2H8j1IPgVEGDgxjs7Mz6ue6U2nvv7/u6Lnfz+i/u+vyxLPPXSpCqbZmuqxmsL1nDowKmKlb85wMDgER25+6EJdUpn9TUN9T2vCoddZdGXu/h9+WGsVgfR7UK5bv4IhozsVG9zNpZ7bwhEKKaBaRUZVGXPypoSEmpm4tQetb5+/64MN6cOzpX34QPZNXbsiiJzx/3j+M9La3E4NDRVx2CUMRoV5v9lTKXXRrYOcp7zl8rn8FaopGm6x7BKZeOs/MVz4ZCsSHSMqfohuW1zKocOZLvMa7U42L0tjX27MkTzDw+88+/17NmZXrE/lJVRzNsvr+PexycxaFhHP1vX9PFVuuPXwCagtyRJJyVJutUX4woaBm99MI0mhbDw2r1VDB7ekX++OpsLL+5J/8HtmXVZf158+xI6dY6oi6ku43sKt+jAgCHVd6R5OaXgZUGtKDJ5uVXXA2yIP+7xYWK1ONi8PqnatrQUsjKKXJz6GWw2lW8+2+Enq5oXvsqKudYX4wj8w8Vz+pJ8NM8t1VCSYPSE2FqP265jGDfeMbqO1nlm3nWD2bn1JOXldtTTm61ms4EJU7oT3T602uOEtwrwWkegqlq1mj/XV6AlM62IvFOldOwcQXhEzbN9GivJx/JQFAlPpWYZJ+u2WS1wIkIxAoaNjmHqrN6s+OUgsiJXbO7e8+jEWq/Y65vINsEseGM2y346wO4daYSEmpk2uy8jx9ZMbiAwyMSocV1I2HjCZQVpNCqMHHtWUbIyxk3uxoG9mW6rdrPZwJiJXWtkD0BRoYU3nl/DiaR8FIOMw64yZlI3brlrdLNQh2zdJtjrxrqv9p1aOsKxC5AkiatuGsbUWX04sCcDs9nAoGEdMAc0vqyOc4mIDOLa+SO4dn6VSQKVcstdF2C1quzedhKDUcFhVxk0vAN/uvuCal
0/fHQM6/tHk7g/y2XzdOjITvQf3L7G9ry+YA3Jx0459z1OP2w2r08iIjKQy5uBOmT33m1oFRlE9nliaSazwvRL+/nRsuaDcOyCCiJbBzF+cuXZJM0Rk9nAvY9NIj+vjOyMYqLahdZIRE1WZO7/+2R2bT3JpnVJyLLEuMndGDi0Q40zYtJPFpKanO+2mW2zqqz8NZF51w5uVFk2tUGSJB59Ziqv/HM1uVmlyIqEw64yYUp3Lp7T19/mNQuEYxcITlOXjCVZlhg2OoZho+smG5CXW4pikCtW6udiOb2fUNuCtsZE66hgFrwxh9TkfAoLLHTp2oqwZrSP4G+EYxcIGpjszGKyMopp1yGUqGjXjd5OnSO8yk+0ah3ULJz6GSRJonPXSH+b0SwRjl3gNzS7g8y1u7GXWmg3cRDmVtXPZmmKWMrt/OeltSTuz8ZgkHHYNfoOjOaeRydW7GdERAYxalwsWzemuOTqm8wKV9ww1F+mC5oYwrE3IIUF5ZxIyieyTRAdY3yTz91UyVy/h9WXPYXucDovzeZg6D9uZuCj1zS4LWceMI4yK9ETBtbbA+ajtzZxcF8WDrtWkYFzcG8mH/9nM3c/PKHivPn3jCEiMpCVSw5hszqQJGeGTVF+OQ6HhsHgmhlTXm4nNSmfkFAzHapRUCVo/gjH3gBoqsan721hQ/xxjEYFVdVo3zGcB5+YTISPq1AbM5ZThZSkZGFuFcrKWX/DUeLaMm/XP/9Hq4Fd6TSjfnLfPZG5djer5z2FfjqXXbM5GPrsLQx8+GqfzlNaYmXn1lQXETgAu11j+5YTlJXaKlIrDQaZqbP6sGbFYew2pxhZcZGVHxbuZv+eTB566sKKlNRfvtvLL4v2oRhkVFWjbbtQ7v9bnFuIR9CyaPpJsU2AxYv2sWldEg67RnmZHZtVJTU5n1f++bu/TWsQHBYb6256gW9jrmH5hQ/xfe+bUcvdJXodpRb2vfJdg9llOVXIyjl/w5Zfgr2oDHtRGarFxq5/fE7aim1u52t2B1l/7CVz7W5UW806ORUWWDB4yUFXFJniIgsAxUUWDu7N5JtPt2Mtd5UStllVDh/M5khiDgCb1yXzy/f7sNnUiu9VWmohLzyxstn21BVUD7FibwBW/HLQTaNc03Qy04s4kZxP59hWDWJHcZGFlb8msnu7s6An7uKeDTLvpj+/RvL361AtNlRL5U1FStNyG8QmgONfr0H3oJHjKLPw++VPEzNnDIMeu5bIwd1J+20r8dctQFfP/h7Hf/IosfMmuF3viTZtQzyqR4KzcjUiMoj/fZBA/MojGI0K5V5aANqsDhL3ZdGrb1sWf7fX7XulazqlJVb278kUGjUtGOHY65kzDSY84dQiKW0Qx16QV8aTDy6hvNSG/XQ4YN+uDCbPCea/BzZz3fzh9VKQZC0oIembNajWqle4kkGh3YSBPrfBG2VpuR7fHMD59pD0bTwnFm9k7Dv3s+nu13GUuZ677sYXiOgTQ0S/2CrnMpkUZs3rz6/f78d2jnSDyWxg9hUDWbUkkXWrj+Kwa27hmnMxGpWKJir5XvraaprOKQ/Kl4KWgwjF1DOyLHnV87bbVWK6NMxq/ceFeygttlY49XP54/ejvPbcmnqZtywtF9lUvQeGIdDEoMerlh3SHCqOMktdTSPqgr4YQirJndZ01DIrm+59E83hoSuUzc6Bt36q9nxzrxzIlTcMITTMjCRBWHgAV900lNmX92f5z+5vdd4YOc7ZSKSjF0E1CYmYBnoLFDROxIq9AbjqpmF88MYGlz9ck0lh2OgYtx6epSVWFn+7l83rk9GBCybEcslVAwkOMdfJhh1bUj1K8wI4HDrHjuSScjyPLt18m1cc0iUaze5ZRlcyKCgmI6rNTrtJgxn92t2EdnOGD3RdJ/m7tex79TssWfm0mzSYfn+dx/7Xvyfp23h0h0pYz05c8Na9dJgyrFa2xcy6gJAu0RQdSUOrJGbuKLWC5v5A1FWNoqPV7y0rSR
LT5vRl2py+btktZ2LsnlAMEgaDgq7p3PXQ+Ar9niuuH8Krz/3u8r0yGGRiYiPo1rNyDXlBw1FSZOWXRXtJ2JiCLEuMv7A7My/tV6+SHcKxNwAjx3ZB13W++Wwnp3JKMAcYmDKjN/PO0/2wWh0888gyTuWUVrSHW730EDsTUnnujTk1alN3Pt6aaZ9BkiROJOW7OPYzXZ9kWaq0UXVlGEMC6X3HbA5/tMQllGEIMtPvr5czfIFnhedtj39I4js/4yh1OryjX67i6BcrkRQF/fSDojDxBKvmPsFFS18gf88xjn62AoAeN02j1x2zMQRULuAlGxRmrn+DbY++z7EvV3sNyyCBEmh2+1wJMBFdy9DR+SmL0e1DyUwv9nje7MsHEBUdwrDRMS6iZH0HtuMvj0zkiw+3kpdbiiRLjB4fy413jGrysgPNhfIyG089tITC06mqAEt+2M/OhJM89dIMt++BrxCOvYEYNS6WUeNicdhVFIPs8Q9v09rjFOSVu/T8dDg0CvLL2Rh/nMkX96r1/BOmdGfpjwc8Nr4G5wZe66izqZeH9mfxwRsbKCq0oOvOEvC7HhxPbPearwRHvvJnlAAjB9/+GV3TkRSZ/g9cztCnb/Z4fll6Lgfe/AHt3Lj86SwPXXNd/avlVlZf8gSaQ0U9/eAoSDzBsS9XMXP9GyhVhIHMESGM++Ahxn3wED8Pv5O8XcdwSUWRJSIHdqMsLRfNZq9Ii0SSUAJN9PnzHDRVJfXXzZz48Q8MwQH0uPliokbVrOfsVTcN473X/nB7qxs0vCOXXTvY63VDRnRi8PCOWMrtGE2GenMUgtoRv+IIxYUWl79pu00lM72InQmpjBxbs/681UV8CxoYg1HxupratS3NTRMdnGluu7ZV/5XfE7PmDSCmS4THlbskS4SEmekzoB3gLHl/5dnfyc0uxWZVnV/EtCJefGIlRQXlbtdXhawojHjxDq7L/ZHLD3/Gdbk/MuyZPyHJnr9+mWv3IJuqv+awF5VVOHUAtcxKwYEUkr9bWyM74756AnPrMAzBzlCHITgAc2QY/e67jKH//BPt4oYgKTKSItN+8hBmb/oPpogQfrvoUdbd+AJHP19B4vu/sOzCB9n+5Cc1mnv4BZ25/b7K65RKAAAgAElEQVSxtI4KRpYlzGYDk6f34s8Pjq/yWkmSCAwyCafeCNmRcNJjt68zHbbqC7Fib0SERwQgyRK65hoLl2SpzrroZrOBJ1+czu7tafz87V5SjudhNClIEnToFMYDf7+woiPRil8TcXjYLHSoGvErjzL3ytqFHxSzieCOUVWeZwwNrHMowVFqIXnRWrpfP7Xa14T3juHKpC9JWriGgsQTyAaFxPd/Yctf3wbpnOKlR65BNjg1WxLf/4XchINnw0ynN1z3v7qIbldPptWA6uuxjxoXy8ixXbDZVIxGpeL3kZFWyKoliWSmF9OzTxQXTu/VKAWzSkts5GQVE9k6qFHa5w9CwzzvjcmnF1P1hXDsjYi4ab3YGJ/k9oQ3GmUunF77MMwZZEVm6KgYho6KobTExomkPE5m7Ofm+VNczvMkGwvOV8iTJwrqbEdVdLhouLN9U3VQ5IowzflUmvHiBWNwIL1unYk1v5hvu1zrVh27Z8GXtB7So6I69sh/l7ulQYIzYyZp0doaOXZwrr7P3UvZmZDKO6+sx2HX0DSdQ/uz+O2Xgzz10gzad2wc8gGaqvHFx1tZt+pYhQbO4BEdueOvYxu9pn99M2VGb/btzHB7E1cMMhOn1L5PcVWIdzc/Yym3s2ppIq/883d+X3aIC2f0wmiUMZsVzGYFo1HmiuuH0LWHb7McgkNM9B3YDqPJXS2wc9dWTunY8zCZlAbJuVfMJqb+/ByGkEAMwQFIiowhJIDIoT0I7d4RQ3AAxrBgJIOCt1Y8hqAAes2fUWsbkhauQfeQCeMos7pUx+oe3mzAufGse1FprC4Oh8YHb2zEZlUrGlLYT1cv//edLXUa25cs+nIX61
cfw366AtZudzYtee+1Df42ze/0H9yeaXP6YDQqGE0KptN/09f+aXi96vqIFbsfKS6y8I+HllJUZMFmVZEkZwHKnCsHEdEqAB3n5lhEq4Z9rZ02uy9rVxyt6CV6BsUgM3Fq7VcZjjILRz5dTtI38RhCAul92yw6XzrOY9il3cRBXH3yG5IXrcOSlU/UmH60m+TcRMzfe5yUH/9g78vfuMTWK+wMNNHrjlm0i6t9t6Gy9FyPYwOUnsyp+P9u10+l4OAJjxkzXS6rOj5eGUlHc106DJ1B1+HIwWzsdmfIxp84HBqrlh5yy8G32zX27kijIK+sRekheeKKG4Yy6aIe7NqWhqLIDBsdU+9/08Kx+5Efv95Nfn55hQPVdWen9l++28urH83zW7/RqOgQHnlmCh+8vtFZ3ajrRLcP484HxrnYpNkdnFyeQHlmPlGj+hA52Hv3JXtpOb+OuYfi4xkVDjNr3R5iL5/I+P8+6tG5m8KCPa66Iwd1Z9Pdb3h0vJJBpt998xjxwu21ufUKoi7ohxIcgFp6Xn65ItNu4qCKH/vcOZtj/1tB0eG0iqIpQ3AA3a6bQpsRvetkg1RFm+zGkNBYVmrzqktjMCqcyi1t8Y4dICo6lItm1SxTqi4Ix+5HEjakuK2KAWRFYs+ONL+2qevZpy0vvXsJebllyIrk1lkob+9xfpv6CA6LtSIFsN2kwUz54RkUs3v+eOK7iyk+lo5aflZe4cwGZ5+7L6lxeqAlx3OsX1c1lIC6b0p1nD4SxWx0d+yqRuyVkyp+NAQFMGvjfzj+5Srnm0hwAL1um0mnmXVXqOzas7XHTBdJgj4DohtF042QEBMGo+KxotluV2nbTqhM+gMRYxd4RZIkWkcFuzl1TVVZMeNxLDkFOIrLUcusqGVWMuN3sfMfn3kcK2nhGhenfgZHuY0TP9c8FtvhouFIHhybITiw1kVD51KamuPu1AEkicR3FrvOGWCi160zuXjFS0z58VliZl3gkwIhRZH584PjMZmVij0Pk1khOMTMLXdVr9F2fSMrMnOuGOBWPGcyKYyZ2JXQMP+8dbZ0hGP3I6MnxHrcpNRUncHDO/rBouqRtX4v9mJ3ASq13MahD5Z4vMaTEwaQFLlGOetnGPjYtRhDAkE+60CVQBORQ7rTfnLNYuuFh1PJSUikLDOPwkOpOMqtZK7Z5dycPR9dJ2PNrhrbW1sGDu3A82/OZfrcvowYE8O8awfz0ruXEN2+8ayEZ17Wn7lXDSQw0IjJpGAyKcRN69loHj4tERGK8SOXXTOYPdvTKMy3YLU6kGUJg0Hm2vkjGvVKx3qqyOuK1JPDB+h960wK9ia5pQbKRoWuV8XV2IaQmLbM3fou2//+CWm/bUUJNNPrthkM+r/rq71aLjqWzurLnqLoWBq6XUV3qMhmI5Ii03H6KK8pl2cKmBqKqOgQrrqpdno4DYEkScy+fADTL+lHSZGF4FCz3zd1WzrCsfuRkFAzz70xh01rk9izI43wiEAmX9yzUTb41TWNjDW7KEnOJLB9pFcZ3jYjPW8Y9rj5YpIWrSN7434cJeWnV+pGBj1+DRF9a1dWHdqtA3FfP4Gj3MqJxRuxZBdQcCCZNsOqkfOvw5Lx92HJLnBJmTwjY5C2PMFjKqMSaKb3nbPPnq+qHP5oKYnvLsZeXEbnOWMZ+Pi1BLVrfL/D+sZgkH26UVpUaGH/rgxkRWLQsA4EBlWu/SM4i3DsfsZsNhA3rSdx0xqm6UVtKDmRxbLJD2HJLQBVBwlMESHOUv5z0vyUIDOj/v1nj2PIRgPTlr5A2optnPjJqanS/cZptB5StyKNnC0H+W36Y86KT7vzrSd6wiCm/PxPrzox1rwiCg6mYMnK9zquWmZ15tAbDUiAarOjmIy0HdPfRVo4/up/cnJ5QkWGTuK7izm+8Hcu2fVhi3TuVWGzqWRnFBEWHlBpdeqynw+w6Iudzq5TkjM8edt9Yxk9PrbhjG3CCMfewigvt7Nnu1OTZs
Dg9tW6ZvVlT1F6IuusABZOTfTIwd2x5hVjySmgzcjeDF9wK1EjvWe3SLJMp+mj6DR9VJ3vA5zOdsWs/8NeeLaphAZkrtvDnhe+8ioytmruE6jzqt5g1ewOrjjyP9JX78CSW0T0+AFEje5bEerJ2Zro4tTPXGPLL2HvSwsZ/erddbvBZoSu6yz5YT+Lv9uLJIHq0Og7sB13PjCekFDXLKbDB7L54atdbk1HPnxzI7HdWzeq/YXGinDsLYgz5emyLKHrzlXQrGsi0HXda1y68MhJChNTXZw6OEMWebuPcUPBYo/pjfWNrusc/mAJmodWe2q5lcT3fvHo2AsOpnBq51GCLxtQ5RxKoJnADq3pect0j59nrNqBZnMXbdPsDlIXbxSO/RzWrjzKz9/ucSlkOrAnk1ef+52n/uVaq7BySaJH4SxN01m36ihX3ji03u1t6oismBZCQX457/x7PTariqXcgdXiwG5XKSq0sHPrSa/XWXMLkb1thOm6R52U+qbwUCo/9LmZhEfe8zq/t03c4qTMamXhGIICGPjoNciK901AQ2gQstHzWMYwUZRzLuc7dXBWraYm53Mi2TUkVpBXhqcGsapDc34mqBLh2FsIm9cnoXvQVdF1Z7Ntb7Qa2A3Ni+ZJYPtITBG1a8BRW1SbnWVxD1B0NN1Vr/08osd5DrVE9OtS6XVKgAlDcAD9H7qiyjZ9Xa+ciCcPZAgOoM9dl1R6bU3RdZ3UpVv4/YqnWTHzcY589htqJV2fGorkY6f44I0NvPD3Ffy4cLdXWef8PM/HFUUmK73I5diAoR0wepCXNgcY6FfN8GFLR4RiWghFBRaP1YFnPvOGMSSQwU/cwJ4FX7r0GVUCzYx+7S8+79RTkHiC0pQsIvrHEtzJXeL35JItzlW6F/EvJAlDkJmRL93h8ePQ2HZ0vHgkBbKr3XKgiVGv3kWHC4cR3CkKQ2DV1auB0ZGM/+RR/pj/EiCh2R0oJgOdZoyi53zP4ZvaoOs6G277N0nfxld0lMpav5fEd35mxtrXq+wUVV/8sfoon32QgN2moutw7HAOK5ck8o+XZ7pVnLaJCiYnq8RtDNWhuYlhTZnem1VLD6EWWSu0chSDTKvIIEaNq5/GFM0N4dhbCH0GRLNq6SGsFteYsCQ5i2AqY/D/XUdIl2h2L/iCspO5hPfrzPB/zqfD1OE+s89yqpDVlzzJqZ1HkU0GVIuNLpeNZ8Knj7lkt5ScyPK+UpUkOl86jmHP/olW/WO9zjXp6yf47dufKA0woes6xtAghi+YT+/bZ3u9xhvdrp5M+8lDSF60DntRGR2mDa9eumUNyE1IJOmbeJcHq6PUQv7+ZI58soy+d/v27aA6WMrtfPZBgkt4xW7XcDhsfPnRVh544kKX8y+7djCfvrvZtT+rUaZ7rzZ0jHFtyh0SZuaZV2ax6H872ZGQiixLjJkYy7zrhor8+GoiHHsLYcCQDnSMCSc1Ob9i5S5JzuKS6Zf0q/L67tdNoft1U6o8r7asueIZcrceQrM7KlIoT/y8kW2Pf+iyCdl6SA9ko8FjOKXDRcOZ8v0zVc5lCDAR3Lkt0/N/xl5Uhrl1mNduTtUhsG2renWuyd+vw+GhH6taZuXYFyv94tgP7c9GUWTANUyn67B3Z7rb+ePiumEpt7Poi104HE4Z4mGjYph/zxiP40e2DuKO+8fVh+ktAuHYWwiyLPH4c9NY/O1e1q8+it2uMnh4JzrE2Ny0YBqa4qQMchIOotnd+5ke/mAJI1+6s6JjUfTEQYT36kT+vmS0c1buSqCZYc/cUqN5FbMJJaphwxi6ruMos2AICqh2GEtSZOdT2EP4SVL8s00mK95tl2TPn02Z0Zu4aT3JP1VGcIhJFBzVI8KxtyDMZgNX3jjUJV0sPj7efwadpiwtF9lk9CgSptkdOErLMYU7N2klSWL66n+z6d63SP5uLbpDJaxnJy54616iRvf1mU35+5JI+20rhqAAuswbT2B03YqNdF1n/x
vfs2fBl9gKSzGGBjLosWsZ8MjVVTr4rlfFceCtH91kig3BAfT8k+dmIrquc+RgDmmpBbRtF0rfge0qWu35gt79oz1uc8iKxLDRMV6vUxSZNm0bdsO9JSIcu8DvVJapYo4MxRgW7HLMFB7CpM//jwmfPIpms2MI8p12y5mNyuML16A7VCSDQsLD7zL2vQfoceO0Wo+758WvXTagbfkl7Hr2f9hKyhj+7PxKr209tCd97ppL4ruLnQ8/XccQHEDU6L70uPEit/NLiq3866mVZGUUo+s6siQR1iqQ/3uu9vafj8mkcNeD43n75XVomo7DoWEOMBAUbOL6W0f6bB5B7RCOXVDv5O05xqEPllCelU+n6SPpdt0Ul6wTc2QYvW6byeFPlrmsSpUgM8Oev9XrilY2KBUhGl+RtHANSd/Gn5VKOB0e2njna7SLG0JITNsaj6labex54SuXzU9wdpTa/+oiBj9+XZUPp1Ev/5kul03g6Oe/4Si10PXKODrNGu0xz/6jtzaSllroovVvyyrhzRfiiZvtu7DbkJGdeP6tuaxddYTcrBL6DGzHmAmxLb7PaWNAOPZ6RFM1UlOcDSFiYlv59FW4qZD4/i8kPPgums2OrmqkLU9gz78WMmfL25hbnU2JG/Xa3QS0bcX+V7/DVlhKUMc2DF8wv06r5Npw8O2fKlIKz0XXdJIWrmHgI1fXeMyytFyv6ZmSIlOSnElEv9gqx4ke25/osf0rPae83M7eHeluDVw0TSfp6Cn6ZapYrQ43/fTaEhUdwhXXi0rQxoZw7PXE/t0ZvPfqH1itDiScxRV3PTSBvgPb+du0BsOSW0jCA++gWly7JpWeyGbXs58z+rW/VByXFYUhT9zA4L9f75TP9VLRWd/YztGdORfNZsdW6J6HXR0CoiLcJBnOoNtVAn0oFmYpt3vdvAQoL7Pz4RsbuefRiT6bMy+3lH27MjAaFQaP6EhQsNgU9Tei8rQeyM4s5vXn11BUaMFqcWCxOCgssPDac2vIza6dc6gJmqqRl1uKpdzudtxTc+T64uTSLUieGonY7Bz/+neP10iSREHiCRIeeY8Nf36V1CWb0TXPTrE+6Dx3LLLZPZRgCA6odd6+MTTI2bT7vLCRbDYSM2cM5siwWo3rifCIQIKCvIdCdB12bU2l0EuFaE357n87efSun/jiwwQ+fXczf/3TIrZuTPHJ2ILaI1bs9cDqZYc89jJVVY3flx+u16YJ8SuO8N3/dmCzqmi6zogLOjNtTh8WfrqDIwezkWWJoaNiuPGOUfXeKV3XNI+aH84PPR/e86+v2fXs/ypCN8e/Wk2bEX2YtvxFrzK8vqT/A1dw5NPlWHOLKtIvlSAzbccNoN2kwbUas+hYOmkrtqGf91BtPaQH4z95pM42n4ssS1x360g+fHODizLiuRiMCrnZJYRXIptbHXZtO8nKXxPdKprff30D3Xu1IbJNsJcrBfWNWLHXA+knC1FVd8/lcGiknyyst3k3rUviy4+3UlJsw2ZTcdg1tm5K4bnHf+PwgWx0HVRVZ0dCKs8+ssyjgp4v6TRjlMcQhGw0uDSEPkPhkZPseuZz1PKzDbIdJRZyEg5y+KOl9WrrGQLahHPJjvfpe8+lBHeJJrxvF0Y8fxsX/bKg1vIJG+54BVtBCZz35lF0LB2lHuQA+g2MJriScIjDR02mV/yaiNXqrm6p6zob4pPqPL6g9gjHXg9079nGo4iR0aTQrWebepv3h692uSnoqQ7dLfyiqTolJVa2bqjfV+bA6EiGv3AbSpC5ojepIcgphTv0H+6SuimL1nl8EKhlVg5/3DCOHZx2j3rlLq5K+op5+z+h333zkI0GdF2n9GQOpWk51R7LYbGRtX4veAiBqVYbp7Yf8aXpAHzx8TZKij2rXkoSjB4f65PWi8WFnjWGHHaN4kLfhHoEtUOEYuqBydN7sXzxQeyOc0IREhiNcr12SsrN9rzx5wmrxcHRwzl08dzJzmf0/+vltB3Tn8
T3FlOemU+nGSPpect0jKHuaXeq3eE1nn5+VWpDk5OQyLqbXqD0RDYAod3aM+Hzx6vWhdF17xkxkuTz/QNd19m26YTHN0aAwCAj19/omybTg4Z1ID21EMd5YUdzgIF+g4QKoz8RK/Z6IDwikCdenE63Hq1RFBlFkejesw1PvDidsPD6a4QcEVn9mKnRpBB1TgXg8SO5fPPZdr75bDvHj+T61K6oUX2Y8MmjTFv6Av3unefRqQPEzL7A48alEmCi27UXeriiYShJzWb51IcpOnwS1WJDtdgoOJDC8skPUZ6VV+m1hkAzoT06evxMUhTajPD9k1XzkoETEGggNDwAg4+EtKbN6UtgkNEljddoVOjQKYxBwyoXlhPUL2LFXk906hzB0y/PpLzMmerXELoYc68cyFefbHMJxzi7Jelui0ZZlhg/uRs7duXw2Xub+WPNceynY+6rlh5iwpQe3Hj7SJ/L8lZGm2G96H7dFI5//XtFLrkSZCYkpi397rmsXuYsPHKS8sw8Wg3o6pJXfy6J7/zstVPSoQ+WMOTJG72Ov/+N7yk9keV2XDIqTPzssToVWOm6Ts7mAxQeSiWsVwxtx/RDkiT69I/m4D73OTVNJyDAd3/y4RGBPPPKLH74ehc7E05iNCqMn9KduVcMQPaTho3AiXDs9UxDCh3FTetJcaGFX7/fjyQ7ta579WvL0FExfPe/nRVO3mhUuOexSYRFBGIpt7NhTbrLw8BmVflj9VFGjunc4Hn3Y99/kJjZYzj0/i/Yi8uJvXIiPefPwBjs2wweza7yy+i7yd+XjGxyqkX2uedSRv7rDpeHma2whJQf/3ARHDuDarFxaudRr3M4LDZ2PPlfjxo4pvAQOs0cXWv7LacK+e2iRyg6kuY8IEmEdmvP9JUvc8Mdo/jnY8ux2xzOkIzklAC4bv4IJMldebEutI4K5vb7hApjY0M49maEJEnMvWoQF1/Sj6x0Zyf4iNPKjZOm9uBIYg5Go0KP3m0qVlTFRVasVvfsGKtVZf3qow3u2CVJovPcsXSeO7be5rCXllOYeIKC7UdA0yrkAw69s5jgTlH0v28e4CywWjziz5SmeQ5NyWYjkYO7eZ2nMPGE1zceR0k55Zl5BHWo3Wb6+ptfpGB/isveQ8GBFNbe8DwX//YSz785h2U/H+DwgSzatA1h+AWd2b8rneDWhbz+/BpmzetPzz41l0cQNA2EY2+GmM0GOnd1rWY0mQ3099BW7EyIRlJVYo7to0PKYWTVQV5UR+x9fFc401hI+m4t6295keBnL3NLP3SUWdj30sIKx777+S8pz8wDLzFrxWSg9x3em3OYW4d53fTVNd1N3Ky6WPOKSF+9021s3aGSuW4PlpwCWkdFcMNtTjGuwwezefkfq3DYNSbNCmLn1pPs35XBLXdfwLg47w8mQdNFBMJaOMEhJsxmhYFbVtHl8B4Cyksx2axEpyUR8u5HlKRm+9tEn1F4OJX1t/zLY2jkDOXZBRX/n7xorcfYOoA5KpyLV/2boPatvY4VEtOWyKE93DTTZZOBmNkXYAypXXjJml/iNTYvGxWsecUuxz59x9m5qCLtVQebTeV/HyTg8NLPVtC0EY69hRMcYqKHsYzw/BwU7ewfuYSObrGy98Wv/Gidbzn0/q9Vpk2GdT+bzSEbPL/QygEmhj5zC1Ej+1Q61rGvV1N8JK0ipVFSZAxBZloN7Mq4Dx+qofVnCekSjWzyYpuiENrt7JtZWamNzPOaRZ9B1yElKd/teH5eGT8t3M07/17Psp/2U1riOSde0HgRoRgBF/U2s0tzX7npdpW037b5waL6oeRENrrD+wpVCTIz/MXb0XWdvS8tpCzjlMfzJCD2svGVzpW6ZDMbbn/FtTmGJBHStT2zt7yDXIdWfLJBYcRLd7Llr/9xkzke/sJtLgJqigetnjPomo7Z7LryP3wwm38/sxpV1XDYNXYmpPLLon089a8ZtOvoDM2VlljZ8kcKhQXl9OgdRf/B7V
ukcmljRjh2AYFtwlECTW4degDMbcI9XNE0aT95MGnLtuDwcJ+GsCDGvf8gXS4Zx/YnP+HAa9+7N/+QJRSzidGv311lR6UdT3zi9u+pO1RKkjM5tf1wlav9quh960wCWoex8+lPKU7KIKRLO4Y+cwux8ya4nGc2O4uF9u/OcKtADosIoGPns42kNU3nnZfXuzQ8t9lU7HaVj97ayBMvTidxXxavPvc7uq5js6oEBBiI7hDG3xZMIyBQ6LA3FoRjF9Bh+gjUv7qn8xmCA+h3eiOxOdDjxmnseeFr1HPj5rKMOTKUeQf/S0DrcGfzi9cWeXzISYrM7M3/IXKg+4ZjRvwuEt/5GcupIjrPHUvh4ZOejZAkCg6k1NmxA3S5dDxdLq38zQHg1nvH8Oyjyygtce4tmMwKBoPMfY9PcsnaOXmigLIy9/0HXYfjR05RXFTOG8+vcXH8FouDtNQCvvtiJzfePqrO9yTwDcKxt3R0nVWz/u4sbz/vo9ir4vxa8elrjKFBzEl4h4SH3uWULDllc2dfwKhX7iKgtfPNpCQ502uDaMVkxBjsXjm84+lP2f/qdxVFVTlb3Btzn8u5MfCGoFVkEC+9eynbN58gI/sg1986kNETYgk8b4WtazpeAyoSHNyb7UnyBoddY8Oa48KxNyKEY2/hWPNLKE3NRj8vO0IJMBHRr0uDVp56Q9d1Un78gwOvL6I8p5AOU4cx6LFrnRkgp4oI7d4BxVy9QrCg9q2J++oJ4uPjubx8udvnge0i3f4tKuxQNQKiIlyOFSdnsu/lb1yaiahlViSDgmSQ0c/RUZEUmeBObYgeP7BSG4uOpZPw0Lukr9yGbDDQccYoOs0cTeSgbrQe0qNa93k+RqPCBRO6Eh+fQlycZ72imC4RGE0KFov7Q6lT5wgq+yrY61kpVFAzhGNv4dgLSz22glMtNtKWJTDwoav8YJUr2x77gMR3F1fYWXwsnUPv/4p0etWNDkOfuZkBD1xZ57nMkWHEzB3DiZ83usTYlQATXa+Kc9O5OblkM56WubpDxRge7GyIrchoNgeth/Vk8ndPV/qwLEvP5ZdRdzk7OWk6KjaSv40nedFaFLORiH6xTFv2IgH1sPchKzJ3PjCeN1+Mx+HQ0FQdg0HGYJS59Z4xtIoMxOFp81miRXUGawr4xLFLkjQdeANQgI90XX/RF+MK6h/ZqCAZFPdsEUnyacu22lJyIouDb/2Ieo6TPWOrDhV55juf/C8BbSLoceNFAGSs2cmeF7+m6EgarYf2YPATN9B6aPWUNcd/9Ahry54jfdUOZLMRzWqn04xRjHn3frdzJYOCt6WsuVUIl+3/LwUHUwhoE05I5+gq597/+vfOB9j5MQ9NRy23kbfnOGuufpYZq1+p1r3UlIFDO/Dsq7NY+Wsi6ScL6dazDVNn9q5omjHn8gEs/fFAhQ67LEuYzArXzq9ddylB/VBnxy5JkgK8DVwEnAS2SpK0WNf1A3UdW1D/mNuEIxsNqOc5diXQRJ+7L/HJHHl7j3P442VYcwvoNGM0sVdMrHboJGP1TqfzPD9D5TwcZVZ2/fNzetx4EUc+Xc6me96s2AAtScni5G9bmbp4AR0urLrxsjEkkKmLF1ByIoviY+mE9exEcKcoj+d2njuWhAfecTuuBJjocdM0DIHmqqV9zyFj9U6vRVEAut1BzqYDlJ7M8WpTXWnfMZyb7vSsY3PpNYPp0i2SZT8foCCvnD4Dopl9+QCfNO4Q+A5frNhHAUd1XT8OIEnSQuASQDj2JoASYGL0m/ew5d63nA5U19FVjaFP30z02P51Hv/g2z+x9dEPKlrdnVi8iT3/+prZG97yKt97LobgAKRq5nyXnshGtdrYcv/brlktuo5aZmXTXa8xL/Gzau8bhHSOrnKVHdS+NSNfvtPlHg0hAYR178iAR66u1jwu43Vqw6mdlTffkE0GyrPy682xV8XQUTEMHRXjl7kF1cMXjr0jkHrOzyeB2svWCRqc3rfOpM
ul4zi5ZAu6qtFxxiiCfBCGKcvMY+sj77tsLDpKyik+ksaeF79m+IJbqxyj08zR1W5GEdIlmhVvQpcAACAASURBVLQV2732Uy1JycKaV1SRAeMr+v7lUtpNGszhT5ZhzS2k08zRdJk3odIerfbiMlJ+2oA1r4h2EwdVhIn63385Gat3eMy1P4PmUAnvIxyrwDuS7qW7S7UHkKQrgOm6rt92+ucbgdG6rt9z3nl3AHcAREdHD1+4cGGd5vU1JSUlhISEVH1iM6M+79uaW0hJao6b2BaAbDLSamDXao1jLyql6FiG8wdvTv7MKlzCYxu6M+dEDumBJEu1u29dx1FuQzbIyNVorK2rmsfUSXtxOcVH004PqYMkYQoLIvS0nIElK5/S9NOKkufdiyTLBLZrRWAlGjWV0VK/59A87n3y5MnbdV0fUdV5vlixpwHnLh86nT7mgq7rHwAfAIwYMUKPi4vzwdS+Iz4+nsZmU0NQn/ed+O5iEp78wWOxT1DHNsSlflPtsSw5BRxfuMapXDisJ0c+X0HasgRkRUFzONA1vVK5AMmg0HHaCCY/eDtQ8/s++PZPbPu/j5BkCc3mIKJ/Fy5c9AwhXdxDNYnvLWbHU59iLypFNhroc9dchi+4FdlowF5azjcdrsRe7NoTVAkyE/vMLRVZSNa8IjLW7CInIZHkResoPZFFYPtIBv/tenpfM6fWaagt9XsOLevefeHYtwI9JUnqitOhXwNc54NxBY2AM116LLmFRI3uS2DbVtW+ttOs0SQ86L6xKJuMxF45qUZ2BERF0O/es12Uulw6HlthCcVJmfxywV+8O3VJwhASQECbCMZ99HCN5jxDys8b2PbYBy7hkbxdx1g66X6uOPYFsqKg6zoZq3ew9fEPyd99rKIpt2ZzcPCdn7HmFTH+o0dI/XWzx0iRWmYl8e2fKxy7OTKM2MsnEnv5REb+645a2S1oudTZseu67pAk6R7gN5zpjp/our6/zpYJ/E7BwRRWzPw/rKeKkGQZzWaj95/nMuqVu6q1YgzpHM2gv9/A3he/xlFuBV1HCTIT2LYVQ564oc72mcJD0B2qM6vHSyZJl3kT6HbtZDrPHVfrNnS7n/vCLeatqxrW/GLSV2yj04zRJDzwDoc+WuLx7UQts3L8q9UMf/42bAUlFU7/fOzFZbWyTyA4H5/kseu6vhRY6ouxBI0DzaGyfOrDlGfmc27D1MMfLiGibxd63z6rWuMMeeIG2k0aROK7i7HkFtJ59hh6/ml6tTJiqkNw57aoHgqszpC2PAHNaqPzJbVv31aSnOnxuG5XKT6eQd6eYxz6cElFJyZPyGYThYdSaR83BLcGtACyRPtqpGIKBNVB6LELPJK+chuOEoubE3KUWtj7cvVj4wDtJgwi7qsnmL7iZfrdN89nTh1wvk1U0jjZUWohY80ukhetq/UcEf26eDwuGRQi+sdyYvFGjz1Rz0Wz2gnpHE147xhir5iEIeis5oykyBhDAhn23Pxa2ygQnItw7AKPlKXloqme49aWbPfmDP5CsztQAisvdnKUWjj6qbsuTHUZ+swtKIFml2OyyUBo13a0mzTYmWdfSWhKNhpoO25AxUbrhP8+ysh//5nwvp0JbBdJ16snM3fbe4T37FRrGwWCcxGOXeCR1iN6e/+smqX51UHXNErTcmodX47oH4sh0F1x8Xzy96dQndTe4uPpbH/iY9bd8iJHP1+Bw2KjfdwQJn35N4JjopDNRmSTkU4zRzP991eQJIku8yYgG73H7zWHSvspQ9E1jf2vL+Lb2OtIeOhdTOHBxH3zJJO++BthPTrW6L4FgsoQImACj7Qe0oPocQPIWr/XpcBICTQz/PmqC4sACg4ks+Mfn5G9YR8BUREMeOgqut8wtWLj9fg3a0i4/21sRaXoqk6nGSMZ//EjmCOr30Q7+bu13uqRXLDkFJDyw3piL5/o9ZykRWtZf/O/0B0qmt1Byvfr2fXs58ze8jZdLh1P50vGYckpwB
AcgDH4bL/SiD6dGfDwVex75TtnP9XzHyC6zu7/b+8+A6Oq0gaO/8+9U9MTEgiBQOid0HvvoFJU7AXbupa1r+8quvaua1ldy9pdFSsiRYoCgvTee0lCIJAEQuq0e+/7YSASZkISSDLJ5Pw+wWRy7zMpT86c8jzP/I/jG/aSNntl8QJr5sodzB/7D0bOet479y5JlUSO2KVSDZ/xLG3vGI853O493NO1JaPmvED9vmWXGji+aR8ze99Fyo9LKTpynBOb97PizjdY/dC7AKTPW8Mft7xC0dETaEUudJebQ3NWM3fEQ35H1rqmkbF0M2mzVuDIPgnAns/n8cetr+LMzPF5vs/nO91s//f04v8bms62N39gxV1vsvujORQdO8EfU15GK3IW11L3FDgoSMtk3SMfAiCEwF4/ukRSP63bUzcxduG/CG1S3+/9NaeLlB+X+uya0QqdrH7ovTLjl6SKkCN2qVQmm4Ver91Br9fuwDCMCh2KWf3Quz7lgD0FDna9O5NOD17B+sc/8UlyuttD7t7DHFu2tUTN8uwNe1hw8aO484sQQkFzuuj49yvZ/V//2wtL48z2NnXOWruLE1v2k/aY9/CUKdTGmofeAz99O3W3hwPfLqb/B382n3blFpC9fg/W6HCiOzcv/rrE9WpLWJP6FKQc9b25biDMCoafZYsTm/aV+zVIlSs/18ne3ZmEhllo0TouaHq3ysQulUtFTzoeW+b/KINiVslYuqXU1nGGrpOzPaU4sXuKnMwd8XdcJ/JKPG/bv77z7Ul6DorFTOOLemMYBosmP4Vy95DiPwqeAgcIgSjll/rMfeebnvsfm577EsViwtB0QhJiGTHzWSJbew9fN5k4gKy1u322Pqp2i7eGjZ8mHuaI0HK/DqlyGIbBj19t5JefdmAyKxiGgT3EwgOPD6NJUvkP4dVUcipGqhJnbucrQQisUWGEJfmvmihUhfAzFhJTZyzD8PgePtIKnRil1YQ5+5omFUtkKB3vv5yc7Sk4sk76PulUVUt/8SRe3AfwrglsfvFrNIcLd24hngIHuXvT+WXoA8XTN21uHUdIwxhvA5BTVLuF6E7NsTWI9tk9o9qttL1zfLleR1VyuTSyMwtwl9I9KtisXHKQuT/vwO3WKCp04yjycCK7kJceXxAUXwOZ2KUq0fq2cag2322IitlEw2Fd6fLPGzCFlNxCKFSFkPgYGg5JLn6sMD2rRJONM6l2i+9WRwGKzVtgzNYwBktUKJFtE2k5ZTSa043mdJU6MkcR3m2Np5KvarNgjYmgxfUjWXnfO6y4603fblOGgSffwaG5q4FTfVXXvkeHByYT0igWa70I4od2ZdAXjzB63kuENKqHOTwEU5gd1W6h0ZiedHn8+nN9KauUpul89dFa7rruG/5x9wzuuu5bvvtiA3o5/2jWVrOnb8Xl9E3gHo/GxjWlNCKvReRUjFQlujxxI5mrdpC1Zhe624NiMSNUhZGznkcxm0i6dCCFh7NYP/VjDMNAd7pRTv0hWPPw+3R86EpC4mOI7dkWxWLybT4hBAkjuqOYVA7NWYViMaN7PER3SGLkrOcxhdqYM+QBTu5MJWfrQXJ3p7Pj7en0//AhhMn/j310hyT6vXc/29/+icJDmSSM6I47r4BFk59Gc7pKrRqpuz0UpB4r/r853E7u7kO4cvLxFDo58ut6ZiTfRu8372Lyga/IWLyJoiPZ1OvRBpPdypHf1hPWrCFRbZtUzhe/Ar78cA1LF+7DdUbP0vmzdgAw+frgPQmbc7zI7+Mej86J47W/tINM7FKVMNksjPntNTJX7SBzxXZsDaJpOrF/iSma9ndPos1tF7F0ysukzlyOJ6+Q3LxCdrz9E3s/X8CE9e/TYGAnojskkb1xX4k5dZPdSrenpxDTuQV5BzPI2XqAsKR4ojt6SwGve/wTcrYeKN6qefpk6LJbX6Pve/exOSvVu1iqGwiTimo10/+DB4nr3a5418+JbQeZ2evOc5YKAO87jZ
gz9vbv+2IB6XNXF4/uT9971b3v0Gh0TxKGd8PjcLHk2uc49Mtqb/u9Uz1RR8x4pkLbPS9EUaGLJb/t82lE7XJqzJ+1gwlXdsZiOb/6OjVd81axbFqf7lO7X1UVmrU8v5LINYmciqnjDMNg4dxd/OfVJUz7bB1Hj+RW2rWFENTv054O919Oi2uG+513L0jLJHXGshK7W3SXB1dOPhue8nY7Gr3gVVrfMhZTqA0UQVzvdoz57VViOrcAIDwpnsSL+xYndYC9n84tsf++OCZVQSCIaptI86uGUa9bK1rfMo4JGz4grne7Es898N3iMksFKFYzUR2TqN+3ffFjO9+d6bdBuKHrHPhmMQCr73+HQ7+s9s7XnyxAK3KStXoni6585pz3q0xZxwpQSynHIBCcPOF/VBsMLr0m2eePltmskJgUTcs2gelMVZnkiL0OyzlRRHrqSZb8koHT4UFVBb/N3sVt9/WnVz//9VEq2+EF6/wexzc8GmmzVgLeHqR9376Xvm/fW+5tl6XNy6MbaA4Xqj2Uwf979JzX0F2eMhdo4wd2ZtgPT5aIyVPgPyHqbg13fhGa08Xez+b7/OHR3R6OLdtKwaFMTKE28vYdJrRJ/QqVSq6ImNhQPKWUOzYMg8iosk/01lZJLerx8FMj+PLDtRzYl43VYmLg8OZccUO38651X5PIxF6HTftkHbZIHafDO3+taQaapvHhW8tJ7t4Iq7XqfzzUEGupRbxMZy2+ntyVxo53fiJ372EaDOxIm9suxhbrv81dkwn92PvZfJ867Yau02hMT47sK7slb9OJA9j+lv9GIacdW77N50BVk0kDyd132Gc7pinESuOxvXCdLCi1vIEwm1h5z79Jn7sGxWJGc7pocklfBn76f6XvNDpPoWEW+gxIYtWylBLTMRaryuCRrbBUw/c/kFq1rc+Tr46r8BmN2kBOxdRh61am+n1cKIIdm/2Xqq0Id0ERqx96ly9jJ/J52DgWjJ9Kzs6S92wyvh+Gn2Jjqt1K6zNKA6f+vJwZ3W5n53szSZ+7mk3PfsmP7aaQu9enWRcA3Z6agjUmvMS2Q1Oojfb3XUZYov/ToWeL7dmGZpOHeKeASqMIUn9aVuKhjvdfhr1+lM+9G43qQVzvdthiI72nef3wFDpIn7f21JbKAnSnm7RZK1ly44vlqnVTUVPu7EPvAU0xm1VsdjNmi8qAoS24akr3Sr9XTRVsSR3kiL1OKzVPGKBfYBIxdJ25wx/ixKZ9xdMih2av4uiSzUzY8AHhzRoCYI0OZ9Dnj7DkhhfB8E6TmMLs1OvWio4PTgZAc7lZcsMLJRYxtSInmtPFirveZPS8l33uH5IQy6QtH7Htremkz1mFrX4U7f42icRx5e+zLoRgwMd/p8nE/iy+6hm/B6J0j4YrJ7/EY9aYCCZs+ICtr/9A6vSlmEJstPnrJbS8cZQ3iQhBt2dvYfUD/ynxbkCxWzDcms9ireZwkfLDUr6MnkDHh64g+dFrvBUlK4HZrHLbPf255uYeZGcVEhsXSkjouatlSjWfTOy1kMulYRjGBU+VJPdsBGT7PK5rOu06xVf4evmpR9n1/ixObD2AJTqcnG0HS851GwaeQiebnv+KAf/984h+0mWDqN+/Iwe+XojjeC4Nh3al4dAuxSOpzBWlTJvoBkcWbkD3aH67I9niouj+zE10f+amCr+W04QQNJ3Qn2ZXDGH/V7/5HGISwn+DDGtMhN975+xMZfWD73J80z5CGsXiysnHmXkSa70IWt9+Mdtf/wGtlHlvd24BW178CsexE/R562/n/Zr8CQ2zEhpmLfuJUq0gE3stknk0j4/fWcmubUcxDGjWsh5T7uxz3kegr7mpB3Nmz8dsUXG7NIQiMJsVrr+tF3a7uewLnCFj6WYWjHsE3a2hu9wIk+q3D6nh0chYvNHn8ZD4GDrcf7n/i9eAt8pdn7yRtJkrcOcVFid3U6iNJhP6ldiNcy6pPy/jt0lPlHirJFSF3m/dTf
u7J6G7Pez890+c69yjp9DJ7g/n0PXJG6ttW6RU+8g59lqiqNDFUw//wo6tR9E0A1032Lc7i+cemcfxrILzuma9uFAaNYliwhWd6NA5noFDm/PYC2MYNKJlha5jGAa/X/scngJH8fbAUptLA/b4mApdP65PO79TD0JRig8pVSTWXR/MImfbQb6Km8SC8VM5Xo4iXOHNGjJhwwe0vHEUIY1iieqQRM9X/8qgzx8p930XX/Wsz/yXoemsfvBdPIUOFLOJTg9fWeYiqWI1k7PD//qIJIEcsdcayxbvx+nw3X7ncWvMn72Tq248v8UuRRFccnknLrm8U9lPLsXJHSm4TuSX/US8o9yOD0yu0PVVi5lBXzzCoiufRndrGG4Pqt2KKdRG3//cW6FrrbjrTfZ9voCQpybgzM7l0OxVZCzcyNjfXye2e+tzfm5Y0wYM+PDvFbrfaZlrdvrdVw/e3qlZa3YRPziZzo9eC0Kw5aVppTYf0Z1uQhvX/r3WUtWRib2W2Lc7q5TaFjorlxxg5ZKDCAH9Bjfj4ss6Yg+pOQtgpjA7CDBcHtrfdxlNJla8sXTiRX2YuOlDdr47g7x9R6jfvwOtbxmHNTq83NfITz3K3k/nlUywhoGn0MGav7/H2IX/qnBc5VVWO8HTO2iEECQ/ei2d/n4lK+58k71f/op+RryKxURcn3bFbfYkyR+Z2GuJ+IYRmM0KbrdvBcKc40XF7/Dn/ryD9asO8dS/LqqS4+CFh7M4uSuN8OYJxcklsm0TLFFhfk9bRnduTtenpuApdNJwaBdCKjgNc6aIFgn0evWO8/78Y8u3Icwq+IZJ5sod533d8ojt0ba4hMHZhEklrlfbEo8pZhN93rkHd0ERqT8tKy47ENu9FUO/e6JKY5VqP5nYa4lBI1sye/o28JPYz5y29bh1srMKWLX0IAOHt6i0+2tOF0tufJG0GctRbBZ0p5v4wZ0Z+u0TmMNDGPy/R1lw8aPobg+6y4NiNSMUBWd2LgsvfQJzuJ12d02k65M3opgD82NnjQ5H4H8htrR95ZUlJD6GFteNYN8XC3zqk/R86S9+1xBUi5khXz1GwaFMcranEJbUoLjuuySdi1w8rSWiY0J44PFhREbZsNlM2OxmFNV/knI6PGxaW7mlR1fe+zZpM1egOd3e2iYOF0cWb+L3618AIH5wMhO3fET7ey6l8bjeNJnQDwODwvQsMAzcuYVse+MHlt7ku+e8ujQc3g3F5rvbR7VbaHP7JVV+/4EfP0y3Z27GHOltrGFPqMfQ7/5Z+m6gU0Ibx9FoVA+Z1KVykyP2WqRthwa88fHlpOw/jqbp/DpnFyuXHPA5aKQogvDIyjt+7ilysu+LBd5GzWfQnW7S562h6NgJ7PWjCU+Kp+fLtwPwQ+sb0M96vlbk5OAPS+j+wq3lPv1ZmRSTyqg5LzJv1MMIVfHWcheChoOTSX7suiq/v1AUkh+9luRHr63yexmGQXpqDo4iD02aRQd9eQCpJPndrmUURRSXFTUMg3UrU30WVU0mhSGjWvn79PPiPJ4LpUxhqFYzhYezSxSqMgyj1KP+qtXCiU37qiSxu07mk71hL7bYSKI6JPk9Kh7bvTVXHf6W336ZT/tX7yCub3vqdanY9s7SnD6FaokKK3ULpu72kPrzcnJ2pBLRMoGmkwagWit3oTs9LYc3n19MzvEiFFWg6wZXTenOsDHn3vUjBQ+Z2GuxVm3rM35yZ2Z8sxlxalLN0OHy67rQtPn5L1Kezd4gBtVi8luXXHd5iGiRUOIxIQSW6HCfPqUAhqYRUslb9QzDYOPTn7PlpWkoVjOGWyO0SRytbhqDp9BFTHJzEi/uW5xsVasFS1QYbScOqbT7b37hK7a8PA3d6UZYTHS47zK6/PMGFPXPBF9wKJPZA+7BeSIPT74DU5iN1ff/h3FL3yTijHaA4G2YXZRxnNDE+pjs5T8R6nJ6eP7R+eTnO0vM5X/9yVriGoRV+HXt3ZXJnp2ZRETY6N
63ic/Btcyjefz83VZ2bMkgItLG6PHt6NW/aVDWX6lNZGKv5S65vCP9hzRj49p0hICuPRsTFRNSqfdQTCrJj1/Phn9+iqfwzy0lphAbbe4cjznc937t77uMLS99XaIWijCphDdPICa5You6BYcyOb55P6GJccR0au7z8X1fLGDrq9+iOVzFWxlP7kxj7T/+C4Z3YdRWP5qLlr1V4p1F3oEjZCzehDkylMZje1UogZ5p49Ofs/WVb//82jjdbHvtOzyFTnq98tfi5y254QUK07OKT6568orwFDhYdOXTTFj3vvcxh4uVd73J/q8Xek/v6jrt77mU7s/eXK76MOtWpuFxaz4LtC6nxszvt9JvZPneHbjdGq89vZD9u7PweHRMZoUv/ruaBx8fTuv23ndbGem5PPnQHJxOD7pukHk0n4/+vZwDe7PrVBGxmkgm9iAQExta5W+zO9x/OYrFxManv8CVk485zEaHB68g+ZFr/D4/+dFrKEg9yv4vfyseRUe0bsTIWc+XezSnuz0svfllUn5Y+uc12jRm5OwXSmyb3PTCV363Wp5Obu68IjxFLpb/9XWG//g0hmFQkHqM6eNe8jbeUBQQMPynZ2g4pEuFvi6a08XWV78t8QcPvEf/d/5nBl2fuBFzmB3n8VyOLd/u2zBbNzi5M5X8lKOENW3AsltfJWX6HyX22u/493RUm4Wu/7yhzHiyjuXjOruN4Bkfg/K9k5sxbTN7d2UWl/PVTsX9r2cX8u/PJmM2q3zz2XocDneJNR6nU+PX2TsZdUk7YupV7gBDKj+Z2Ouoo0dymf3jNqwRJ3nj+UWMm9iheCTmjxCC9ndPot1dE/EUODCFWM85glRUlQH/fYhuT9/Eic37sSfU8zva9uf0SDp11grS564pMRI/seUAv14ylfFr3i1+ftER30JmZzM8GofmrEJzutj/9UKcJ3J9ToL+Ov4xrkr/1u87kNIUHi793orJRH7KUaI7JOEpcpbaRFuoKu78IoqOneDgD0t8qkh6Chxse+07kh+9tszyCYmnFkodRSWTuxCUOT1nGAZzf97B7B+2kpfrvwa9YRhs2XCYbr0S2b75iN8KoYqqsGNLBv2HlO/7LVU+mdjroAN7s3nhsfm4XRqDLwphw5pDbNt0hOtv7cmgkededBVCYA4r/57vkIb1CGlYvh6ShmGw/I7X2ff5AlCE3wYXhkcjZ0cKOdsPEtU+CYDojs04tnxb2dfXDXSXh22vf49xQy+/zzn441Ja3Ti6XPEC2OpH+Y7CT3HnFTJ3xEO0veMSOv3f1djiIilIy/R5nmo1E9kmkex1u1FPnRE4m+728PuMzSxbcxRN0+k7qBlDRvk2w+jcNYGo6BAyXXlo2p9Z12xRmXhlZw6kbi71tfzw5Ubmzdzh94TzaYYBRQXe+MxWFYfD992BEAKbTaaWQJL72Ougz95bhdPhnRcFwPDOwf7vo7W4nP7fxleHPZ/MZf+Xv3lH6OfoWqSYTRSkZxX/v/tzt6CWY348ql0Tjv6xhRPbUvx+XHe5cWadrFDM5lA7La4f6d066Yfj6Am2vPQNCyf+k77v3o8aYi1RrVINsdLn339DMamENYsvtZ6MW4evp21nz85M9u/J5rsvNvDM/831+X4pqsJjL46ma69EVJOCqgoSGkfwwGPDzjlidxS5mffzuZM6gK4ZtO3oPXE8eERLzKWcbu7UrZHfx6XqIRN7HeN2axzcd9zvxxRFsH9P2dMaVWX7mz/4nys/i+ZwEX3GtE784GSGff8E4S0bedvsqQrizCkLRWAKsdLub5NYOPkp0P2PsBWziQYDKl4Mrc9bd5N02SBUm7nkfU/HW+QkY+lmbHGRjF30LxIv6kNYUjyNRvdk9NyXaH7VMADs9aNpeulAnz9Sis1CesuOOM84dexyaWQcyWXZ4v0+9wuPsPG3/xvM+19fxTtfXMELb08os77+0SN5KKW0KDzNYjUxZFQr6sV5D1hNuKIzSS1isNpMCO
FtqWe1mrj3kcFVUs5CKj/5fqmOURSBooCfbnQYuoHFGrhfSEd2bpnPUUOsNL96mE/NmcZje3P52N7e8rcWMxlLNrPlpa/J23+Eet1bkzz1WjY8+ZnPIavTFIuJ+v06EHtWzZbTUqb/wbqpH5G3/zAhCbEkT72WVjePRQiBarUw6PNH6PXaHfzQbgqu4/62eeocW7aNDvddxoifny319Q346O+ssJg58M1ChMmEoel4+vdin913vtrl1Fi59CBDR/tfODebVczm8n0/I6PtpTb4AEhsGsXYiR3oN+TP2vMWq4mpz49m59aj7N5xjIhIG736N5UNO2oAmdjrGFVVSO7RmI1rD6FrJVe+bHYzSS3KNx9eFRoOSebAN4t956wFYIAlKoz2911G8tTST26ermWeMKwrCWd1NsrZeqDUfoBx/TowYuZzfnfs7Pl8HivufLN4eij/YAar7n2HwiPH6XLGiVVbXBQhDev5TeyKxYQtzn/j7RLx2ywM/ORher95l3cfe+M4pn21BTFnl0/JZqDS/hBHRdtp1yme7Zsz8Hj+/PqbLSp9BiZx69/6+f08IQTtOsX7fUeQn+dky4bDAHTuliATfjWSUzF10JQ7ehNTLwTrqQUui9XbyPieRwajlLJzozp0eeJGb2I+cw7abqXRqJ5M8Szg2uMz6HrWoZ/yMnQdS4z/Er+mMDttbh6LavGtI2PoOmsf/sBnzt9T6GDzi1/hLigq8XiH+y7z2yhDCFGhcsWWiFAiWydiCrHRf0hzzGbfX1WrzcSQMha7K+KvDwykZds4LBYVe4gZs1mlY3JDbviL/4Xmc1k0bzf33fIDn767kk/fXcm9N//A4vl7KiVO3c8fOKkkOWKvgyKj7Lz0zgTWrkzl8LEdXHVjB/oMSgr4iCqyVWMuXvUO6x75kCOLNmIOs9PmrxfT6eGrKtS82VPk5PCv69BdHhoO7YI5PIT5Y//Bic0HfJ8svPPvTS8f7Pdajswc3Ln+G14oJpWTO9NKuRgqoAAAIABJREFUNOhodfNYstbuZu9n8xAmFSEEQhGMmPU85tDzqyDZrGU9xoxvx9wZO/B4NHTd+8e4e+9EuvWuvMJgoWEWHnl2FBnpuRzNyCOhcWSFT6sCpOw/zlcfrcXt0jhzf8+XH66heevY82rlaBgG837ewaxTWzGj64Vw6TXJDBpeOeUggo1M7HWUyazSZ2AzFi9OYciQNoEOp1hU2yYMn/70eX9+2qwVLL7mOe+eccO7TbDxxX3IXLndb0mEyLZNGDHjGUw2/7tazBGhGKVM3+guD7b6USUeE0LQ79376PR/V3F0yWYsUWE0Gt3jguvBXHZtV3r1T2LVHwfxeHR69G1Ci9axVXJ0P75RBPGNzr+f6sK5u3B7fBeoPR6NRXN3c+Nfe1f4mmdvxTyRXcgXH6zG5dQYMa7m/PzWFDKxS0Gj4FAmi656xmfaJOXHpf4bXKgKza8c4lOn5Uwmu5Wkywdx8PuzDg6pCrE925RazCw8KZ7wpHPvRKmoxKRoEs+zcXl1OnG8yO96gK7DieP+3/2cS/FWTFfJxV2XU+PHrzYybHSrMnf01DXyqyFVqdx9h1nz8Pv8NvFxtrz6zalKkVVj72fz/B8WKmVO1jAMtFKO35+p58u3e7dRnnVNezkPXtU1Hbsk+F3UtdpMdOqa4Oczzi3jcC5qKYnb5dLIPVn2Ftm6Ro7YpfNiGAb792SRkZ5HfKMImreqV2JaQNcNVr4/j90PvAm6huHWSF+wji0vTeOSVe8Q3rziv+BlKUjP8ntqE/AuyJ41paLaLDSdNKDM6+79YoHvbhrDIG3WCjLX7CSup/8tkhdK1w2W/LqHBbN3UVToplPXhoyf3Ll4H3lNNXB4C375aRsnPUXFp19VVSE83HpeZQaiou24S9uKaUBIaM3p71tTyMReg7mcHlxOjdBwS40qg5qX6+DlJ37l6JE8DMNA1wwsVhMdkuMZPLIVDRqG8/Lj82j9+UeY3X
/uG9eKnGhOF8v/+gaj51duJyVd03Bm5/pN4IrVjDncjqfQWTxNIxSF5lcOLbHwWZr9Xy/0u/9dc7hI/Xl5lSX2/765jLVn1Ntf8us+Vi9L5ZnXLyK2fsUXNauL3W7mqVfH8f2XG1mzPBUB9OzflMuu7YLN7rvzqCxRMSG07diAnVuOltyKaVbpMyhJNhHxQ35FaqD8PCef/GclG9d429tFxdi5/rZedOnZOMCReb33rz9IT80pUYvE43GxZnkqm9cdRjEJzGmHEP4WHXWDI4s2oLs9ldb79MTWA8zqdzeefN+35EIRmEJtXLLqHQ7NXcvB7xZjCrVjNG9I//tuKdf11VLiFIqCaq14oiqPQ6k5rF2RWmJeWdcNHEVufpq2mVvv8b+vvKaIiLJz8119ufmuvpVyvTsfHMgbzy3m4L5sVJOCx63TITn+vLZi1gUysdcwhmHw4uMLOHLoZPHoJOtYAe+8soSHnhhOmw4NAhpf7kkHO7cdLZHUz+R0esAJlmraauwpcjJ74L1+kzpAWLOGjJ73EuHNEmh3x3ja3TEegMWLF5f7XVCrW8ZyYvtBn0VZxaTSbPKQC4q/NN7Kif4WIA02b/DfnSqYhYZZmfrCaA4fOklmRj4NG0dQP97/uQRJLp7WOLu2HSMzI6/EW07wLhL9+PWmAEX1p/w8Z6kLWWfKjY7D8NdOTwjiBydX2mg95celfrcxnubMzkUtZStjebW+eSwN+nXEdKqqpbdfqpXkqdcS2aZqGkzb7ZZSd3rY/DTkrisSGkeS3KORTOplkCP2GiYt5URxU4OzHUrNqeZofNVvEIZSjpGuoSjs7DaQ9usWI3QdxTDQVRVbZAh9372v0uLJTzmK7i69xonrZD7ft7qBQZ//g6TLBp3XPRSziVFzXyR9/lpSZyzDFGan5XUjK9wJ6lx03VvnfPf2o0RG20nu3tjviN1iVRku921LZZCJvYaJaxCGalJwu32Te2wN2A1hMqtcfl0Xvvl8faklXlXVe9oyOz6RtYPH0+jATkKK8ugwsTdDnr4WW2zZNVPKKya5BWqIFa20qpCGd9F2yQ0vkjCiG5bI81t0FIpC4zG9aDym8ud0HUVunp86n4zDuTgdHswWle++2MDFl3Zk1g9bEULg8WioJoUOnRvKAzlSmWRir2E6dU0gJNSC06mVOORhsapMuKJzACP704iL2hIeaePHrzdy9LC34NXpwaXVZqJ7n0RatY1jzvTtnLSqeJLHMeaGrnRIbljqNV0uDbfLQ0hoxXYANRrTk7DE+pzcnVbqfnXwTp+kzVxBi+tGlvva1eXHrzeRnpaD59Qf89Pt6Ob8tI2X3p3A5nWHKch30a5TA5q3ig1kqFItIRN7DaOqCo8+N4o3X/ido4dzUU0KumZw+XVdKrUuyIXqPSCJ3gOS0DSd9avSWLnkIKpJYcCw5nTqmoAQgmFjyh5ZFha4+PS9VaxbkYqBd8/y9bf1pGuv8r1WRVUZt/QNVtzxBikzlmGUMi1j6DqeczTvCKQ/Fu0rTupnO7j3OENGVV6hL6lukIm9BoprEM6zb1xMxuFcCvJdJDaNqrF7dVVVoWe/pvTs17TCn2sYBi/9cwGHUnKKF4uzMwv4z2tLeeCxYWU2hzjNVi+Sod8+geZy88ctr3Bg2iI/TaN1Ekb1qHCM1aG0pA6ndhlJUgXJXTE1WHxCBC1ax9bYpH6h9uzM5Eh6ru8OoFM1QCpKtZjp8cJtWKLDUc4owWsKtdH2romVXrvF43CRMv0Pdn/8Cyf3HDrv63To3BB/s0+aRy/3HzdJOlNwZgypVjiUklNqbe30tIr1Hj0ttHEck7Z8yNbXviN97hqssZG0v2cSTSaUvxZ6eRxbsY0FFz2CoRkYuo6h6TS7YggDPv57hUoMA1w5pRs7tmbgcnqKzwdYrSbGTGxPVPT5lfqV6jaZ2KWAia0f6nekChATG3Le17U3iKHny7fT8+Xbz/sa5+IpcjJ/3CO4TxaUePzg90
uI7dmGdndNrND14hMieO7NS5gzfSvbNmcQFW1nzPj2NeaksVT7yMQuBUxklM2nFCuAogrGT64ZO4D8SZu5wu8OHE+hg+1v/VjhxA5QLy6U6/9S8Trl52IYBgf2ZuMoctOsVSz286jTItVOMrFLAfP1J+uglB2K59oaWVl0TTuvNnuOrJPoHv+Lmk4//U4DIfXgCd54bhH5eU4URaB5dC69Jhl7VNmfK9V+cvFUCphd2475fdxqNbF7+9EquWfh4Sx+m/RPstfv4TPraH4Z+gA52w9W6BoNBnQEf+USFEH84MC/03A5Pbz42HyyMwtwOjwUFbqLS1IUFZZS1lgKKjKxSwFj8tOgGbyHnU432q5MnkIHM3vfRdqsFd6b6AYZSzYzq9/fKEjPLPd1Yjq38La7CzmjR6wQmEJsdHv6puKHNq49xMtPLGDqvTOZ9uk6cs6je9D5WLcyDc1PazqXUyPnRJGfz5CCjUzsUsD0HdQMk8n3R9BkElVSxXL/1wtx5eSX3ONuGGgON9vfml6haw399gm6PH49oYlxmCNCSbyoNxeveJuo9kkA/PDlBt55ZQnbNmVwKCWHBbN2MvXemWQdy6/EV+Tf8ewCv2sXgN+ELwUfOccuBcyVN3Zn785MsrMKcBR5sFhVhBDc+8jQclWQrKhjy7fh8VNTRne5Obp0S4WupZhUOv/f1XT+v6uLHysqcvP5+6v4Y9E+nI6SidXj0SkscPH9/zby1wfK7tp0IZo2j8FiUXE4Sq4DCEHQnomQSrqg77IQYjLwJNAO6GUYxtrKCEqqG0LDLDz7xsVsWpfO/j3ZRNcLofeApoSGWcv+5PMQ3rwhis2C7jirG5IiCG9xYYu1um7w4mPzOZSaU+pJUl2HTevO/yBTebXv3JC4+DCOHCp5+MtsUYmKkfvi64ILHRZtBS4FllRCLFIdpKgKXXslctm1XRg2pnWVJXWAVjeNQfFzeEi1Wehw3+UXdO0dWzK8p2jPUR4AvNUxq5qiCB55djS9+jfFZFIQApo0i+bvT47AYqn6+0uBd0EjdsMwdgA1qh+nJBUVuVk0dzerl6VgtqgMHd2KPgObEZIQy/Cfn2XxFU8jVAVzRAiGbtDv3fvK1fv0XPbvzsJVRl0Xs1ll4LDKq+F+LqFhFm6/fwC33dsfXdOL/6AcPrq9Wu4vBZaccJOCSlGRmycfnEN2VkFx+duUfdmsX5nGXQ8PImFYV67K+J6Fc+fT58enqd+3PSb7hb9LiIyxY7GacDr8J3ebzUR8owgmXNHpgu9VEYoiUBQ5Sq9rhL8uLSWeIMSvgL9KRFMNw5hx6jmLgYfONccuhPgL8BeABg0adJ82bdr5xlwl8vPzCQuruZ3fq0qwve6TOQ5yjhdy9o+1EN6j+6e3UVb26zYMg7SDvrVvBGAPNRMeYcMeEviTn8H2/a6IYHjtQ4cOXWcYRpllSsscsRuGMaIyAjIM4wPgA4AePXoYQ4YMqYzLVprFixdT02KqDsH2uv/5wGxS9hf4/di4SU258sbuQNW87r07M3n9uUXFC5Yej8Yll3Vk4lXJlXqfCxFs3++KqEuvXU7FSEGltMVBRRVYK3mrX3paDlnHCmjcJIp6caG0bBvHW59ezs6tR3EUuWndvj7hEbZKvWdtdfRIHgt/2cXh9Fxato5l6OhWRETJHTpV5UK3O04C/g3EAbOFEBsNwxhdKZFJ0nkYMroVqQeO4zyrH6uqKvQemFQp98jLdfD6s4tISzmBqip43BpdeyVy+339MZnVaqlzU5ts2XCYt15cjObR0TSDHZszmPvzdh5/cSwJiZXX/1b60wVtdzQMY7phGI0Nw7AahtFAJnWpKh1OO8l3X6zns/dXsWltut9a7v0GNaNDlwTvXLrwjtTNFpVJVyfTsFHlJJG3Xvidg/uycTk1igrduN06G9Yc4tvP11fK9YOJrum89/ofuJxaca15t1ujsNDNx++sCHB0wUtOxUi1wvxZO/j28w1oHh1dN1
i2aD9JLWJ4+MkRJfaGK6rCPf8YzK7tx9iw+hBWm0qfgc1IaFw5Sf1YRh4H9mUXJ6nT3C6NRfP3cOWU7lVyara2SjlwAo+/PrQG7NuThdPhxmoL/KJysJGJXarxsjML+PazDbjPSBBOh4cDe7P5be5uRl/SrsTzhRC07dCAtlVQb+ZEdiEmk1K8lfJMmsfA5fRgD7FU+n0lqSLk0EKq8dasSMHwU7jd5dT4fcGeCl3r8KGT/DRtEznHi9i/J6vCsTRKjCr1dGlYhBWbbGZRQtNm0Zj9nLYVAlq2iTuv0bphGOzbncV3/9vA9K83kZ6WUxmhBhU5YpdqPM2jY5TSG/XsRtjnMuPbzcz8fiuapjN4XAgvPDaf3gOSuOXuvuU+PR0WYWXwyJYs+W0vrjMWaC1WlSuu6yJPYZ9FURXueHAgbzy/CF0z8Hh0LBYVk1nlpjv7VPh6hmHw4b+Xs3pZCm6XhhCCOdO3cdGlHWrUttJAkyN2qcZL7t7I77y1yazQZ0BSua6Rsv84s77fituloZ+aH3c5NVYvS2HD6ooV5rr21p5cMrkToWEWEN62djfd2YcBw1tW6Dp1RYfkhjz/1iWMvKQtXXs1ZvwVnXj53Qnnte6xflUaa5an4nJq3pL6uoHLpTH7x22k7D9eBdHXTnLELtV4jZtGM2BYc5YtPlB8ZN9iUYmMtjN6fPtyXWPZ4v0l5uhPczo8LJq/h269E8sdj6IIxl/eifGXd0LTdLlYWg5xDcK56tThsAuxeMFev2Ub3G6NPxbto2nzmAu+RzCQiV2qFW64vTedujVi4S+7KSxw0bNfE4aMalXuhUpHkdunzMBpTsf5t4uTSb1qHc8qYP/ebCIibbRqG1fq98owKLVOT10kE7tUKwgh6NYrkW69yj+yPlP33k1YufSgzy+/xarSu5zTOVL10TWdT95dxfLf92M2qeiGQXiElQFDm3Ngb3aJ9Q3wtlLs3qdJgKKteeRwQ6oTOnVLoGWbWCzWP3domC0qcfXDGFBNpXSl8pv78w5WLj2Ax61TVOTG6fCQnVnA77/upX6DcMxnlI6wWFVatomjU9eEAEZcs8gRu1QnKIrggceHs/S3vfz+614sVjeXXp3MsDGtsVpNFOQ7SUvJITLKVmknVKXzN2/mDp9RuWFAUaGbm+7sy5FDJ1n++wFMJoVBI1syYGgLFEXuSDpNJnapzjCZFIaObs3Q0a1PVfrrgGEYfPP5ehbM3IHJrKJ5dBISI7n30aHE1AsJdMh1Vn6es9SPFea7GDOhPWMmlG/hvC6SUzFSnfbbnF38OnsnbrdOUaEbl0sj9eAJXnnyV8rqVSBVnSZJ0X4f1zSDZq3qVXM0tY9M7FKdNvvHbT5v+XXNIDuz4LxOpkqV48op3X1KMJstKl16NCI+ISJAUdUeMrFLddrJkw6/jwsBWcf8N+yQql7bDg144PFhNG0eg6IIQsMsjJvYnr8+MDDQodUKco5dqjOOZxficnio3zC8+LH4hAjSU31rjWiaQWIp0wFS9WjXKZ6n/3VRoMOolWRil4Le0SN5/OfVJaSn5qAoCla7ibGTvTtfrri+K++8uqTEdIzZrNC2Y4NKK/Xrj8vpYc3yVI5m5JHQOILufZr4LZYlSedDJnYpqLlcGs/+Yy55uY5TJ091nE4PmUdV9u3OpEvPxtx2b3+mfbyOnBOFKKrCwGHNufqmMvsFn7eM9FyefWQubpeGw+HBZjMx7ZN1PP7SWOrFhVbZfaW6QyZ2KaitXZGCy+nxKSdgGDDz+63c9+hQevVrSs++TXA4PFgsapWXCXj7lSXk5zmLY3I4PLhcGu+/8QePPiebkEkXTi6eSkEt43AejlJqiBxOO1n8byEEdru5ypN65tF8Mg7n+vyh0XWDfbuyzrl/W5LKSyZ2Kag1bBSBzeb/jWmjJlHVHA04nZ5ST0gKReByykJW0oWTiV0Kat37NMFmNyPOSqZCwPjJnao9noRGEaUukkZE2oiWp12lSi
ATuxTULBaVx14cQ4vWsZjMChaLSnSMnfrx4TRrWf0nGBVV4cY7enuLkZ36WyOEN86b7uwjOzBJlUIunkpBL65BGI+/OIbcnCKcTo3Y+qH8/vvvAYunV7+mxMSEMPOHrRw5dJLEpGguubwjSS3kUXmpcsjEHqQO7svmyw/XsHdXFmaLSv8hzbnyxm51utlyRJQ90CEUa9k2jvunDg10GFKQklMxQSg9LYfnp85n945MdN3A6fCw5Le9vPj4fFnYSpLqAJnYg9CMbzbjcpUsbOVx6xw+lMuOLRkBikqSpOoiE3sQ2rszC0P3HZm7XRr792QHICJJkqqTTOxBKDLG/1yy2aISVcrHJEkKHjKxB6GLJnXAavXdK60ogp59ZcNfSQp2MrEHoR59mzB2UgfMZhWb3YzNbiIi0sbDT43Aaqu7u2Ikqa6Q2x2D1KSrkhk5ri27dx7DbjfTpn19lCqugyJJ1UXTdHZuPUpRoZtW7eKIrEFbWWsCmdiDWFiElW69EgMdhiRVqgN7s3ntmd9wu3QE4PFojLy4LVfc0E2e3D1FDuEkSao1XE4PLz/xK3knnTiK3BQVuXG7dX6ds5sVSw4EOrwaQ47YK4GuG6xbmcqi+XtwOT30HpDEoBEtsVrll1eSKtP61Wnouu7zuMvpYc707fQb3DwAUdU8MvNcIMMw+OCNP1i/+hDOU3W/U/YfZ/G8Pfzz5TFysVKSKlHOiSI8bt/EDpCbU1TN0dRccirmAu3blcX6VX8mdQCXU+NYRh6LF+wNYGSSFHxatI712wxFCG/9HclLJvYLtG5VKk4/zRFcLo0Vv8s5P0mqTC3bxNG0RQxmc8nUZbGYuPTq5ABFVfPIxH6BTGa11I44JpP88gajvFwHu3ccIzuzINCh1DlCCP7+xHCGj21DSKgZVRW0aV+fR54bReOm0YEOr8aQc+wXqPeAJH75aTv6WUW3rFaVIaNaBSgqqSpoms4X769m6aJ9mM0qHrdOmw71uevvgwgJtQQ6vDrDYjVx9c09uPrmHoEOpcaSQ8oL1LhJFBdf2gGLRUU59dW02ky07RhP38HNAhucVKl+/Gojy37fj8etU1Toxu3W2Ln1KG+/HLimHdXB5fSQm1OE7qewnFQzyRF7JZh4VTJdeyWy4vf9OJ0a3fsk0r5zw1KnaKTaR9d0fp2zC5fzrHLIHp3d2zPJOpZPbP2wAEVXNYoKXXzy7irWrUwFICzMytU396DPwKTABiaVSSb2StK0eQxNm8cEOgypijgcHtwu/9vsTGaF7MyCoEvsrz69kIP7sou3F+acKOKjt5djDzGT3L1RgKOTzqXOTMUYhoHT6UHX/P9yStK52OxmQkL9n0nwuDUaNoqo5oiq1oG92aQeOO6zZ9zl1Pjhyw0BikoqrzoxYl+7IoWvPl7HiexCTGaFwSNaceWUbpjNvqVtJckfRRFMujqZaZ+uKzEdY7Go9B6YVKP6qVaG9NScUuuuZKTnVXM0UkUFfWLfsDqN919fVtwqzuXUWLxgD1mZ+dz3qGwmLJXfsDGtMQyY/vUmHEVuFFUwbGwbJl/XNdChVbq4BmGUtkIUXS+kWmORKi7oE/u3X2zw6f/pdmls3XCEjMO5xCcE11toqeoIIRgxrg3DxrSmqNCFzW72ewoyGLRuX5+oeiEcO5JXYjeMxaoy/opOAYxMKo/g/Kk8Q0Z6rt/HVZNC2sET1RyNFAwURRAaZg3apA7eP2L/eGYkSS3rYbao2O1mLFaVCVd0pv8QWWirpgv6EXtEpI2cE77FgQzDICY2NAARSVLtEB0TwhMvjyXzaD55uQ4aJUbKona1RPAOOU4ZO7E9lrP6fyqqoF5cKM1b1QtQVJJUe8Q1CKN5q1iZ1GuRoB+xj7qkHdlZBSyauweTWUHTdBo2iuS+qUNltxVJkoJS0Cd2RRFce0tPJkzuTFrKCSKj7CQkRgY6LEmSpCoT9In9tLAIK+06xQc6DEmSpCoX9HPskiRJdU2dGbFLUm
2Vn+dkzfIUCgvctOvUgOatYgMdklTDycQuSTXYxrWHeOflJSBA8+ioJoX2nRtyzz8GB/U+eunCyJ8MSaqhCvJdvPPKElwuDZdTQ9MMXE6N7ZuPsGDWzkCHJ9VgMrFLUg21flWa3y25LqfGwrm7AxCRVFvIxC5JNZSjyF1qmWmHw13N0Ui1yQUldiHEK0KInUKIzUKI6UKIqMoKTJLqunad4/2O2BVF0LmrbHQhle5CR+wLgI6GYXQGdgOPXHhIkiSBt59uz35NSpTEUFSBzW5m4lWdAxiZVNNd0K4YwzDmn/HflcDlFxaOJElnuvWe/rTp0IAFs3dRWOCiU9cExk/uRL04WcBOKl1lbne8GfimEq8nSSXk5hTxzefrWbs8FQPo1iuRK6d0IzomeBs/KIpg8MhWDB7ZKtChSLWIMAzj3E8Q4lfA31n8qYZhzDj1nKlAD+BSo5QLCiH+AvwFoEGDBt2nTZt2IXFXuvz8fMLCgqsZcXnUltdtGAbpqSfRPDpn/oCpqqBRkygUpWIF3WrL665sdfV1Q3C89qFDh64zDKNHWc8rM7GXeQEhpgC3A8MNwygsz+f06NHDWLt27QXdt7ItXryYIUOGBDqMaldbXvdvv+xi2qfrcTk9JR63WFUuu6YLYya0r9D1asvrrmx19XVDcLx2IUS5EvuF7ooZAzwMjC9vUpek87Ft0xGfpA7ePd1bNx4JQESSVHNd6K6Yt4FwYIEQYqMQ4r1KiEmSfMTEhvqdblEUQUxs8M6xS9L5uNBdMS0rKxBJOpeho1vx+/w9Po3JTSaF4WPbBCgqSaqZ5MlTqVZolBjFlDv7YLGo2OxmbHYTZovK9bf1pGnzmECHJ0k1iqzuKNUa/Yc0p1vvRLZtOoKhG3RIbkhIqCXQYUlSjSMTu1Sr2O1mevRpEugwJKlGk1MxkiRJQUYmdkmSpCAjE7skSVKQkYldkiQpyMjELkmSFGRkYpckSQoyMrFLkiQFGZnYJUmSgoxM7JIkSUFGJnZJkqQgI0sKSFIVKSpys3NLBkIRtOsUj9Uqf92k6iF/0iSpCvzx214+e381qqpgCDB0g1vv6Uevfk0DHZpUB8ipGEmqZCn7j/PZ+6txuTSKitw4Ct04HR7++8YyMg7nBjo8qQ6QiV2SKtmvc3bh8eg+j2uazuJ5uwMQkVTXyMQuSZUsO7MAXfdtEq9pBlmZBQGISKprZGKXpErWvnMDzBbV53GL1US7TvEBiEiqa2Ril6RKNmRUa2x2E+KM3tuKKggNNdN/SPPABSbVGTKxS1IlCwu38tSrF9GjbxPMZhWLRaVX/6Y8+dpF2OzmQIcn1QFyu6MkVYF6caHc/fDgQIch1VFyxC5JkhRkZGKXJEkKMjKxS5IkBRmZ2CVJkoKMTOySJElBRiZ2SZKkICMTuyRJUpCRiV2SJCnIyMQuSZIUZGRilyRJCjIysUuSJAUZYRi+daOr/KZCZAIp1X7jc4sFsgIdRADI11231NXXDcHx2psahhFX1pMCkthrIiHEWsMwegQ6juomX3fdUldfN9St1y6nYiRJkoKMTOySJElBRib2P30Q6AACRL7uuqWuvm6oQ69dzrFLkiQFGTlilyRJCjIysZ8ihHhFCLFTCLFZCDFdCBEV6JiqixBishBimxBCF0IE/a4BIcQYIcQuIcReIcQ/Ah1PdRBCfCyEOCaE2BroWKqTECJRCLFICLH91M/4vYGOqTrIxP6nBUBHwzA6A7uBRwIcT3XaClwKLAl0IFVNCKEC7wBjgfbA1UKI9oGNqlp8CowJdBAB4AEeNAyjPdAHuKsufL9lYj/FMIz5hmF4Tv13JdA4kPFUJ8MwdhiGsSvQcVSTXsBewzD2G4bhAqYBEwIcU5UzDGMJcDzQcVQ3wzCOGIax/tS/84AdQKPARlX1ZGL372bgl0AHIVWJRkDaGf8/RB34RZdACJEKsY0TAAABPUlEQVQEdAVWBTaSqmcKdADVSQjxKxDv50NTDcOYce
o5U/G+ffuyOmOrauV57ZIUrIQQYcAPwH2GYeQGOp6qVqcSu2EYI871cSHEFOBiYLgRZPtAy3rtdUg6kHjG/xufekwKUkIIM96k/qVhGD8GOp7qIKdiThFCjAEeBsYbhlEY6HikKrMGaCWEaCaEsABXAT8HOCapigghBPARsMMwjH8FOp7qIhP7n94GwoEFQoiNQoj3Ah1QdRFCTBJCHAL6ArOFEPMCHVNVObVAfjcwD+9C2reGYWwLbFRVTwjxNbACaCOEOCSEuCXQMVWT/sD1wLBTv9cbhRDjAh1UVZMnTyVJkoKMHLFLkiQFGZnYJUmSgoxM7JIkSUFGJnZJkqQgIxO7JElSkJGJXZIkKcjIxC5JkhRkZGKXJEkKMv8PzLahwCW/L8IAAAAASUVORK5CYII=\n",

"text/plain": [

""

]

},

"metadata": {

"needs_background": "light"

},

"output_type": "display_data"

}

],

"source": [

"# Number of samples in dataset\n",

"m = 200\n",

"\n",

"# Create data and labels\n",

"gaussian_quantiles = sklearn.datasets.make_gaussian_quantiles(mean=None, cov=0.7, n_samples=m, n_features=2, n_classes=2, shuffle=True, random_state=None)\n",

"X, Y = gaussian_quantiles # data and labels\n",

"X, Y = X.T, Y.reshape(1, m)\n",

"\n",

"# Visualize the dataset\n",

"plt.figure(figsize=(6,6))\n",

"plt.scatter(X[0,:], X[1,:], c=Y[0,:], s=40, cmap=plt.cm.Spectral)\n",

"plt.grid()\n",

"plt.show()\n"

]

},

{

"cell_type": "code",

"execution_count": 4,

"metadata": {},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"The shape of X is: (2, 200)\n",

"The shape of Y is: (1, 200)\n",

"Number of samples is: 200\n"

]

}

],

"source": [

"# Show shape of data and labels\n",

"shape_X = X.shape\n",

"shape_Y = Y.shape\n",

"\n",

"print('The shape of X is: ' + str(shape_X))\n",

"print('The shape of Y is: ' + str(shape_Y))\n",

"print('Number of samples is: %d' % (m))\n"

]

},

{

"cell_type": "markdown",

"metadata": {},

"source": [

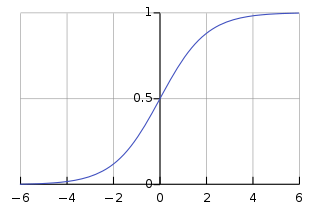

"## Logistic Regression (Simple Model)##\n",

"\n",

"Before training a neural network for this problem, let's investigate how well logistic regression performs.\n",

"\n",

"**Sigmoid Function:** Useful function for binary classification problems.\n",

"\n",

"$$ \\large \\sigma(x) = \\frac{1}{1 + \\exp(-x)} \\ \\in \\ [0,1]$$\n",

"\n",

"\n",

"\n",

"\n",

"\n",

"**Set up:** Given data samples $\\large \\{ x^i \\}_{i=1}^m$ where $ \\large x^i \\in \\mathbb{R}^{2}$ and labels $ \\large \\{ y^i \\}_{i=1}^m$ where $\\large y^i \\in \\{0,\\ 1\\}$. We want to learn a function such that \n",

"\n",

"$$\\large f(X) = Y, $$\n",

"\n",

"where $\\large X \\in \\mathbb{R}^{2 x m}$, and $\\large Y \\in \\mathbb{R}^{1 x m}$. \n",

"\n",

"**Logistic Regression:** Function $\\large f$ takes the linear form\n",

"\n",

"$$\\large Z = f(X; W, b) = \\sigma(W X + b 1_m), $$\n",

"\n",

"where $\\large W \\in \\mathbb{R}^{1 x 2}$, $\\large b\\in \\mathbb{R}^{1x1}$, $\\large 1_m \\in \\mathbb{R}^{1 x m}$ (vector of 1s), and $\\large Z \\in \\mathbb{R}^{1 x m}$ are the predicted labels.\n",

"\n",

"**Entropy:** Entropy is the average rate at which information is processed.\n",

"\n",

"$$\\large H(X) = - \\sum_{x} p(x) \\log(p(x)) = E_X(-\\log(p(x)) $$\n",

"\n",

"The term $\\large -\\log(p(x)$ represents information, i.e., low-probability events present higher information than high-probability events.\n",

"\n",

"\n",

"\n",

"Since this is a supervised problem, each label generates a Bernoulli random variable with all its mass located at $\\large 0$ or $\\large 1$. Thus\n",

"\n",

"$$ \\large H(y^i) = 0, \\ \\forall y^i. $$\n",

"\n",

"**Cross Entropy:** Cross entropy can be interpreted as the average information needed to encode events drawn from true distribution $\\large Y$, if using an estimated distribution $\\large Y'$, i.e.,\n",

"\n",

"$$ \\large H(Y ; Y') = - \\sum_{y} p_Y(y) \\log (p_{Y'}(y)) $$\n",

"\n",

"**Kullback-Leibler Divergence:** The Kullback-Leibler divergence measures how one distribution differs from another and it's related to the cross entropy, i.e., \n",

"\n",

"$$\\large K(Y || Y') = H(Y ; Y') - H(Y).$$\n",

"\n",

"This implies that the Kullback Leibler divergence is equivalent to the cross entropy for each sample of our dataset (since $\\large H(Y) = 0$). Moreover it's easy to show (using the definition of the Kullback Lebler divergence) that \n",

"\n",

"$$ \\large H(Y ; Y') \\geq H(Y).$$ \n",

"\n",

"Therefore, minimizing the cross entropy is equivalent to minimizing the Kullback-Leibler divergence with a global minimum at $\\large 0$.\n",

"\n",

"**Cost Function:** Since this is a binary classification problem, we want to minimize the cross function, i.e., \n",

"\n",

"$$ \\large J(W, b) = - \\frac{1}{m} \\sum_{i=0}^m [y^i \\log(z^i) + ( 1 - y^i) \\log(1 - z^i)] $$\n",

"\n",

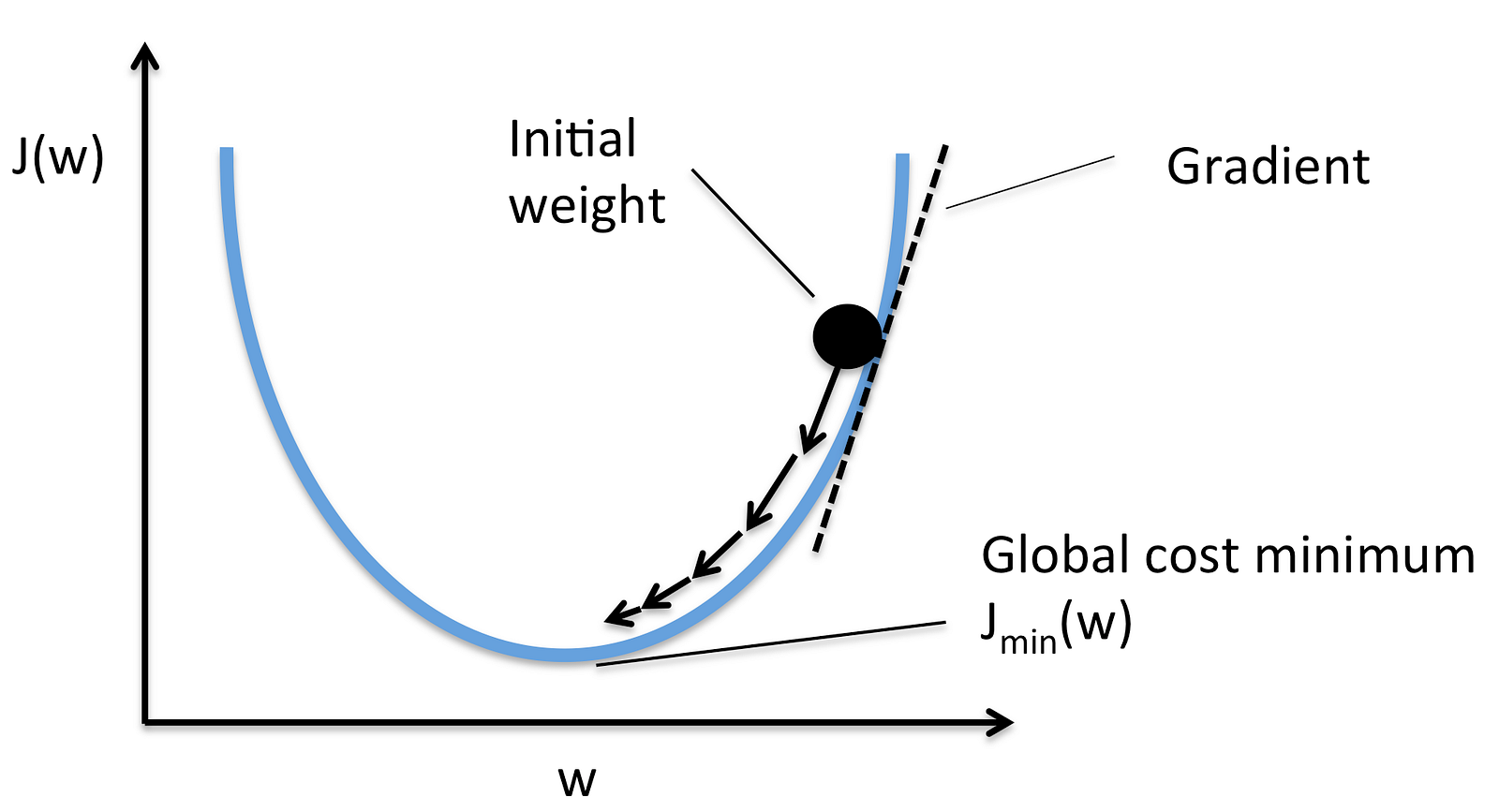

"**(Batch) Gradient Descent:** Based on the observation that the negative gradient denotes the direction of steepest descent. The learning rate $\\large \\alpha$ denotes how far to go in such direction.\n",

"\n",

"Moreover, the gradient is approximated using all the samples, i.e.,\n",

"\n",

"$$\\large W = W - \\alpha \\nabla J(W) $$\n",

"\n",

"where \n",

"\n",

"$$ \\large J(W) = - \\frac{1}{m} \\sum_{i=0}^m [y^i \\log(A_2^i) + ( 1 - y^i) \\log(1 - A_2^i)] $$\n",

"\n",

"\n",

"\n",

"\n",

"\n",

"**Stochastic Gradient Descent:** In this case, the gradient is approximated using a single random sample, i.e., \n",

"\n",

"$$\\large W = W - \\alpha \\nabla J(W) $$\n",

"\n",

"where \n",

"\n",

"$$ \\large J(W) = - [y^i \\log(A_2^i) + ( 1 - y^i) \\log(1 - A_2^i)] $$\n",

"\n",

"\n",

"\n",

"**Mini-Batch Gradient Descent:** In this case, the gradient is approximated using a subset of the samples, i.e.,\n",

"\n",

"$$\\large W = W - \\alpha \\nabla J(W) $$\n",

"\n",

"where\n",

"\n",

"$$ \\large J(W) = - \\frac{1}{M} \\sum_{i=0}^M [y^i \\log(A_2^i) + ( 1 - y^i) \\log(1 - A_2^i)] $$\n",

"\n",

"and $\\large M \\ll m.$\n",

"\n"

]

},

{

"cell_type": "code",

"execution_count": 5,

"metadata": {},

"outputs": [],

"source": [

"# Train the logistic regression classifier\n",

"lrc = sklearn.linear_model.LogisticRegressionCV(cv=5);\n",

"lrc.fit(X.T, np.ravel(Y.T));\n"

]

},

{

"cell_type": "code",

"execution_count": 6,

"metadata": {},

"outputs": [

{

"name": "stdout",

"output_type": "stream",

"text": [

"Accuracy of logistic regression: 55%.\n"

]

},

{

"data": {